This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

This blog has been authored by Doug Seven, Senior Director, Microsoft Health and Life Sciences

Last week we announced the Public Preview of the new Azure Healthcare APIs, an evolution of the Azure API for FHIR that expands support for healthcare data to include patient health data via FHIR®, medical imaging data via DICOM®, and medical device data via the Azure IoT Connector for FHIR (IoT Connector), which transforms device telemetry into FHIR Observations. In October 2019, Microsoft became the first cloud with a fully managed, first-party service to ingest, persist, and manage structured healthcare data in the native FHIR format with the Azure API for FHIR. Today we’re expanding our health data services to enable the exchange of multiple data types in the FHIR format. For that reason, we’re renaming our services to the Azure Healthcare APIs. I’d like to dig a little deeper into how Azure Healthcare APIs work and how you can use them in your health data workloads.

Azure Healthcare APIs have been created to empower new applications to leverage Protected Health Information (PHI) by enabling real-world data (RWD) from Electronic Health Records (EHRs), claims and billing activities, patient-reported outcomes (PROs), and other sources to be collected, standardized, and accessed in one place, in a consistent way based on industry standards such as FHIR and DICOM. This enables you to derive real-world evidence (RWE), discover new insights, and improve clinical and operational outcomes by connecting these datasets end-to-end with tools for machine learning, analytics, and AI.

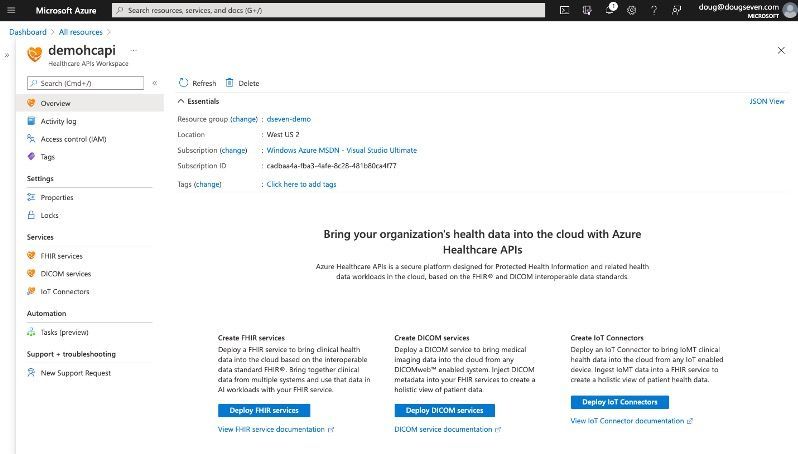

Azure Healthcare API Workspace

The workspace within Azure Healthcare APIs (Healthcare APIs) is a logical construct. It is an invisible wrapper around all your healthcare service instances, such as FHIR services, DICOM services, and IoT Connectors. The workspace also creates a compliance boundary (e.g., HIPAA, HITRUST, CCPA, GDPR) within which PHI can be managed and leveraged for new systems of engagement and systems of intelligence. Each Azure Healthcare APIs instance contains the equivalent of one workspace. The workspace enables you to provision one or more instances of the FHIR service, DICOM service or IoT Connector all within the same compliance boundary. This means that you can have a single data service (e.g., DICOM service) in the workspace, or many (e.g., 10 FHIR services, 2 DICOM services, 3 IoT Connectors). Each service instance is unique—it has its own endpoint, and the data is isolated from other instances in the same workspace—but each service instance shares common workspace-level configuration settings, such as Customer Managed Keys (CMK) for data encryption, or Role Based Access Control (RBAC) settings.
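To make the relationship between a workspace and its services concrete, here is a minimal Python sketch of the idea: many service instances, each separate, all pointing at one shared set of workspace-level settings. The class and field names (`WorkspaceSettings`, `customer_managed_key`, and so on) are hypothetical and chosen for illustration only; they are not the Azure resource model or SDK.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

ALLOWED_KINDS = {"fhir", "dicom", "iot"}


@dataclass(frozen=True)
class WorkspaceSettings:
    """Settings shared by every service in a workspace (illustrative fields)."""
    region: str
    customer_managed_key: str
    rbac_assignments: Tuple[str, ...] = ()


@dataclass
class ServiceInstance:
    """One FHIR service, DICOM service, or IoT Connector."""
    kind: str
    name: str
    settings: WorkspaceSettings  # a reference to the workspace settings, not a copy


@dataclass
class Workspace:
    name: str
    settings: WorkspaceSettings
    services: List[ServiceInstance] = field(default_factory=list)

    def add_service(self, kind: str, name: str) -> ServiceInstance:
        """Provision a service inside this workspace's boundary."""
        if kind not in ALLOWED_KINDS:
            raise ValueError(f"unknown service kind: {kind}")
        if any(s.kind == kind and s.name == name for s in self.services):
            raise ValueError(f"{kind} service '{name}' already exists")
        service = ServiceInstance(kind, name, self.settings)
        self.services.append(service)
        return service
```

Every service created through `add_service` carries the same `settings` object, which mirrors how region, encryption keys, and access control are configured once at the workspace level.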

Each Azure Healthcare APIs workspace is provisioned in a single region when you create an instance of Healthcare APIs either through the Azure Portal or via a command line interface (CLI) or Infrastructure-as-Code (IaC) workflow. All service instances within the workspace are provisioned in the same region. In a future blog post I will dig into the workspace-level configuration settings and what they can enable. In the meantime, you can learn more in the workspace documentation.

Introducing DICOM

As I mentioned, Azure Healthcare APIs evolves beyond the Azure API for FHIR. One of the more notable evolutions is the introduction of the DICOM service for medical imaging data. The DICOM service within Azure Healthcare APIs is an implementation of our Medical Imaging Server for DICOM, an open-source software project available on GitHub. We have taken the Medical Imaging Server project and converted it to an Azure service, allowing you to leave the operations of the service to us. The DICOM service enables standards-based integration with any DICOMweb™ enabled system. Healthcare APIs support a subset of the DICOMweb standard, including Store (STOW-RS), Retrieve (WADO-RS), and Search (QIDO-RS), as well as two non-standard APIs: Delete and Change Feed.

The Change Feed provides ordered, guaranteed, immutable, and read-only logs of all the changes that occur in the DICOM service. It lets you go through the history of the DICOM service and act upon the creates and deletes in the service. Client applications can read these logs at any time, either in streaming or in batch mode. The Change Feed enables you to build efficient and scalable solutions that process change events that occur in your DICOM service.
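As an illustration of batch-mode reading, the sketch below pages through an ordered change log and tallies creates and deletes. It makes several assumptions that are not stated in this post: that the feed is read in pages by offset and limit, and that each entry carries `Sequence` and `Action` fields. The `fetch_page` callable is a hypothetical stand-in for whatever HTTP client you use, so the paging logic can be shown without a live service.

```python
from typing import Callable, Dict, Iterable, Iterator, List

ChangeEntry = Dict[str, object]


def read_change_feed(
    fetch_page: Callable[[int, int], List[ChangeEntry]],
    start_offset: int = 0,
    page_size: int = 10,
) -> Iterator[ChangeEntry]:
    """Yield change entries in order, one page at a time.

    fetch_page(offset, limit) is assumed to return up to `limit` entries,
    skipping the first `offset` entries of the log; an empty list means
    there is nothing more to read.
    """
    offset = start_offset
    while True:
        page = fetch_page(offset, page_size)
        if not page:
            return
        for entry in page:
            yield entry
        offset += len(page)


def count_actions(entries: Iterable[ChangeEntry]) -> Dict[str, int]:
    """Tally create and delete events (the 'Action' field name is an assumption)."""
    counts = {"create": 0, "delete": 0}
    for entry in entries:
        action = str(entry.get("Action", "")).lower()
        if action in counts:
            counts[action] += 1
    return counts
```

Because the log is ordered and immutable, a client can store the last offset it processed and resume from there by passing it as `start_offset`.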

You can learn more in the DICOM service documentation.

Improvements to FHIR

As we discuss the evolution of the Azure API for FHIR to the Azure Healthcare APIs, it’s worth noting some improvements we are introducing for the FHIR service. At the core, the FHIR service in the Healthcare APIs is the same as the Azure API for FHIR, in that we expose the same FHIR APIs and additional operations, like $convert-data, and de-identification with $export. We’ve made some critical infrastructure changes that allow us to support some oft-requested capabilities.

Transactions: The Healthcare APIs FHIR service supports transaction bundles. A transaction (type = “transaction”) consists of a series of 0 or more entry elements in a bundle. Each entry element contains a request element which has the details of an HTTP operation that informs the system processing the transaction what to do with the entry. If the entry method is a ‘PUT’ or ‘POST’, then a resource that becomes the body of the HTTP operation is also required (e.g., Patient, Observation). For a transaction bundle, the FHIR server either accepts all actions and returns an HTTP 200 (OK) along with a response bundle or it rejects all resources and returns either an HTTP 400 for a client error (e.g., error in entry or resource) or an HTTP 500 response (e.g., error at the server).

Example transaction bundle:

{
  "resourceType": "Bundle",
  "type": "transaction",
  "entry": [
    {
      "fullUrl": "urn:uuid:f82d7301-5426-3f39-ae7b-416977c55f65",
      "resource": {
        "resourceType": "Patient",
        "meta": {
          "profile": [ "http://hl7.org/fhir/us/core/StructureDefinition/us-core-patient" ]
        },
        "id": "f82d7301-5426-3f39-ae7b-416977c55f65"
      },
      "request": {
        "method": "PUT",
        "url": "Patient/f82d7301-5426-3f39-ae7b-416977c55f65"
      }
    },
    <snip – additional entry elements would go here>
  ]
}
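As a rough sketch of how a bundle like the one above could be assembled in code, the Python below wraps resources in entry elements and sets the bundle type to "transaction". The helper names (`make_entry`, `make_transaction_bundle`, `describe_transaction_result`) are hypothetical, and the sketch assumes each resource already has an `id`. Sending the bundle to the server is left out.

```python
import json
from typing import Any, Dict, List

Resource = Dict[str, Any]


def make_entry(resource: Resource, method: str = "PUT") -> Dict[str, Any]:
    """Wrap one resource in a bundle entry with its request element."""
    resource_type = resource["resourceType"]
    resource_id = resource["id"]
    return {
        "fullUrl": f"urn:uuid:{resource_id}",
        "resource": resource,
        "request": {"method": method, "url": f"{resource_type}/{resource_id}"},
    }


def make_transaction_bundle(resources: List[Resource]) -> Dict[str, Any]:
    """Build a bundle that the server should accept or reject as a whole."""
    return {
        "resourceType": "Bundle",
        "type": "transaction",
        "entry": [make_entry(r) for r in resources],
    }


def describe_transaction_result(status_code: int) -> str:
    """Interpret the all-or-nothing response described in the text.

    The text names 200, 400, and 500; treating every other 4xx/5xx code
    the same way is a simplification made for this sketch.
    """
    if status_code == 200:
        return "committed"
    if 400 <= status_code < 500:
        return "rejected: client error"
    if status_code >= 500:
        return "rejected: server error"
    return "unexpected"


patient = {"resourceType": "Patient", "id": "f82d7301-5426-3f39-ae7b-416977c55f65"}
bundle_json = json.dumps(make_transaction_bundle([patient]), indent=2)
```

Setting `"type": "transaction"` is the part that matters: it is what asks the server to process the entries as a single unit.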

Chained Search: To perform a series of search operations that cover multiple reference parameters, you can “chain” the series of reference parameters by appending them to the server request one by one using a period (.). For example, if you want to view all DiagnosticReport resources with a subject reference to a Patient resource that includes a particular name, your chained search would be as follows:

GET [your-fhir-server]/DiagnosticReport?subject:Patient.name=Sarah

This request returns all DiagnosticReport resources whose subject is a Patient named “Sarah”. The period (.) after Patient chains the search: it applies the name parameter to the Patient resources that the subject parameter references.
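The structure of a chained query can be shown with a small URL builder. This is a sketch only: the function name is hypothetical and `https://example.org/fhir` is a placeholder base address, not a real endpoint.

```python
from urllib.parse import quote


def chained_search_url(
    base: str,
    resource_type: str,
    reference_param: str,
    target_type: str,
    target_param: str,
    value: str,
) -> str:
    """Build a query of the form [base]/Type?reference:TargetType.param=value."""
    query_name = f"{reference_param}:{target_type}.{target_param}"
    # Percent-encode the value so spaces and reserved characters are safe.
    return f"{base.rstrip('/')}/{resource_type}?{query_name}={quote(value, safe='')}"


url = chained_search_url(
    "https://example.org/fhir", "DiagnosticReport", "subject", "Patient", "name", "Sarah"
)
```

Here `url` has the same shape as the request above, with the placeholder base in place of your FHIR server address.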

A reverse chained search allows you to perform the query the other way around: you search for resources based on the properties of resources that refer to them, using the _has parameter. To find all Patient resources that are referenced by an Observation with a specific code:

GET [base]/Patient?_has:Observation:patient:code=527

This request returns Patient resources that are referenced by Observation resources with the code 527. In addition, a reverse chained search can have a recursive structure.
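To show how the _has parameter nests, the sketch below builds the parameter name from a list of hops, where each hop is a referring resource type and the reference parameter that points back. The function names and the `https://example.org/fhir` base are hypothetical placeholders. The AuditEvent hop in the second call is an illustrative nested case and is not taken from this post.

```python
from typing import Sequence, Tuple
from urllib.parse import quote

Hop = Tuple[str, str]  # (referring resource type, reference parameter)


def has_parameter(hops: Sequence[Hop], search_param: str) -> str:
    """Join one or more _has hops, then append the final search parameter."""
    if not hops:
        raise ValueError("at least one hop is required")
    prefix = "".join(f"_has:{rtype}:{ref}:" for rtype, ref in hops)
    return prefix + search_param


def reverse_chained_search_url(
    base: str, resource_type: str, hops: Sequence[Hop], search_param: str, value: str
) -> str:
    """Build [base]/Type?_has:Referrer:reference:param=value."""
    name = has_parameter(hops, search_param)
    return f"{base.rstrip('/')}/{resource_type}?{name}={quote(value, safe='')}"


single = reverse_chained_search_url(
    "https://example.org/fhir", "Patient", [("Observation", "patient")], "code", "527"
)
nested = has_parameter([("Observation", "patient"), ("AuditEvent", "entity")], "agent")
```

A single hop reproduces the query above, and each extra hop adds another `_has:` segment in front of the final search parameter.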

Chained search and reverse chained search are more robust on the FHIR service in Healthcare APIs than on the Azure API for FHIR, where they are limited to 1000 items per subquery.

For more on FHIR searches, see our documentation.

Connecting the Internet of Medical Things

The Azure API for FHIR introduced the concept of an IoT Connector—a construct to connect high-frequency data streams from devices (e.g., wearables, clinical equipment) to the FHIR service, by transforming the telemetry into FHIR Observations. The IoT Connector service in Healthcare APIs provides you with the mechanism to connect the Internet of Medical Things (IoMT) to Healthcare APIs.

The ingestion endpoint for device data is hosted on an Azure Event Hub, capable of receiving and processing millions of messages per second. This enables the IoT Connector service to consume messages asynchronously, removing the need for devices to wait while device data gets processed. Data is retrieved from the Event Hub by the IoT Connector and processed using device mapping templates and FHIR mapping templates. This mapping process first transforms device data into a normalized schema and then into FHIR resources (e.g., Observation). The normalization process not only simplifies data processing at later stages but also provides the ability to project one input message into multiple normalized messages. For instance, a device could send more than one vital sign, such as body temperature and a second measurement, in a single message. This input message would create two separate FHIR Observation resources, one per vital sign, because the input message is projected into two normalized messages.
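The projection of one device message into several normalized messages can be sketched in a few lines of Python. Everything specific here is an assumption made for illustration: the message fields (`deviceId`, `timestamp`, `bodyTemp`, `heartRate`), the choice of heart rate as the second vital sign, and the list-of-rules mapping format, which is a simplified stand-in for the real device and FHIR mapping templates.

```python
from typing import Any, Dict, List

# Hypothetical, simplified mapping rules: one rule per measurement type.
DEVICE_MAPPING = [
    {"type_name": "bodytemperature", "field": "bodyTemp", "unit": "Cel"},
    {"type_name": "heartrate", "field": "heartRate", "unit": "/min"},
]


def normalize(message: Dict[str, Any], mapping=DEVICE_MAPPING) -> List[Dict[str, Any]]:
    """Project one device message into one normalized message per matching rule."""
    normalized = []
    for rule in mapping:
        if rule["field"] in message:
            normalized.append({
                "type": rule["type_name"],
                "device_id": message["deviceId"],
                "occurred": message["timestamp"],
                "value": message[rule["field"]],
                "unit": rule["unit"],
            })
    return normalized


def to_observation(item: Dict[str, Any]) -> Dict[str, Any]:
    """Turn one normalized message into a minimal FHIR Observation."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "code": {"text": item["type"]},
        "device": {"identifier": {"value": item["device_id"]}},
        "effectiveDateTime": item["occurred"],
        "valueQuantity": {"value": item["value"], "unit": item["unit"]},
    }


message = {
    "deviceId": "device-123",
    "timestamp": "2021-07-01T12:00:00Z",
    "bodyTemp": 37.1,
    "heartRate": 72,
}
observations = [to_observation(n) for n in normalize(message)]
```

With two readings in the input message, `observations` holds two Observation resources, one per vital sign.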

You can learn more about the IoT Connector in our documentation.

Gaining Insight from PHI

To make it easier to gain insight from protected health information, either independently or in combination with other data (e.g., social determinants of health), we are leveraging the power and capabilities of Azure Synapse Analytics, a limitless analytics service that brings together data integration, enterprise data warehousing, and big data analytics. Using bulk export from the FHIR server, you can either export PHI to Azure Data Lake Storage Gen2 or use the tools from the FHIR Analytics Pipelines open-source project on GitHub to create an Azure Data Factory pipeline that moves FHIR data into a Common Data Model folder. Either option enables you to load the data into Azure Synapse Analytics and begin to query it.

The Bulk Export feature allows data to be exported from the FHIR Server per the FHIR bulk data specification. This enables you to either export all the data from the FHIR service (System export), all Patients (Patient export) or a group of Patients (Group export). Optionally you can de-identify the data on export using standard configurations for HIPAA Safe Harbor guidelines or modify the de-identification configuration to support Expert Determination guidelines.
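The three export scopes map to three request paths in the FHIR bulk data specification. The sketch below builds the kick-off request for each. The function name, the dictionary shape it returns, and the `https://example.org/fhir` base are hypothetical; the paths and the `Accept` and `Prefer` headers follow the bulk data specification. De-identification options are left out.

```python
from typing import Dict, Optional


def export_request(
    base: str, scope: str = "system", group_id: Optional[str] = None
) -> Dict[str, object]:
    """Describe the kick-off request for a System, Patient, or Group export."""
    base = base.rstrip("/")
    if scope == "system":
        path = "/$export"
    elif scope == "patient":
        path = "/Patient/$export"
    elif scope == "group":
        if not group_id:
            raise ValueError("group export needs a group_id")
        path = f"/Group/{group_id}/$export"
    else:
        raise ValueError(f"unknown export scope: {scope}")
    return {
        "method": "GET",
        "url": base + path,
        # Bulk export runs asynchronously, so the client asks for an async response.
        "headers": {"Accept": "application/fhir+json", "Prefer": "respond-async"},
    }


system_export = export_request("https://example.org/fhir")
group_export = export_request("https://example.org/fhir", "group", "cohort-1")
```

The returned dictionary can be passed to the HTTP client of your choice; the server is then expected to reply with a location to poll for the export status.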

For more information on exporting to Azure Synapse Analytics see the documentation.

Summary

Azure Healthcare APIs are the evolution of Azure API for FHIR, enabling you to bring more types of health data to the cloud and leverage the Microsoft Cloud for Healthcare to turn real-world data into real-world evidence that you can use to drive change resulting in improved clinical outcomes, improved operational outcomes and/or reduced cost of healthcare. In subsequent blog posts I will deep dive into each of the topics shared here and look at how you can take advantage of the capabilities of the Azure Healthcare APIs in your health data workloads.

Learn More about Azure Healthcare APIs

Watch a webinar introducing Azure Healthcare APIs on HIMSS Learn

FHIR® is the registered trademark of HL7 and is used with the permission of HL7.