This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

As a technology strategist for West Europe, I have had multiple discussions with different customers on IoT projects and the enablement of AI on the edge. A big portion of those conversations was about how to use Microsoft’s Azure AI models and how to deploy them on the edge, so I was excited when Azure Percept was announced with the promise of simplifying the delivery of Azure cognitive capabilities to the edge. I was able to get my hands on an Azure Percept Device Kit to play around with and discover its potential, and it did not disappoint. In this blog post I want to give a quick tour of the device and its components, and show how you can start deploying use cases quickly and simply.

What is Azure Percept?

Azure Percept is a software/hardware stack that allows you to quickly deploy modules on the edge using different cognitive feeds such as video and audio. The applications range from low-code/no-code solutions built on Azure Cognitive Services to more advanced use cases where you write and deploy your own algorithms.

As it stands, the stack consists of a software component and a hardware component.

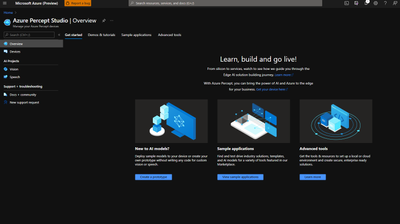

- Azure Percept Studio: the launch point that allows you to create edge AI models and solutions. It integrates your edge AI-capable hardware with Azure’s suite of services such as IoT Hub, Cognitive Services and more

- Azure Percept Devkit: an edge AI development kit that enables you to develop audio and vision AI solutions with Azure Percept Studio

Components

Azure Percept Carrier Board:

- NXP iMX8m processor

- Trusted Platform Module (TPM) version 2.0

- Wi-Fi and Bluetooth connectivity

You can check more details in the Azure Percept DK datasheet

Azure Percept Vision:

- Intel Movidius Myriad X (MA2085) vision processing unit (VPU)

- RGB camera sensor

You can check more details in the Azure Percept Vision datasheet

Azure Percept Audio:

You can check more details in the Azure Percept Audio datasheet

Connect to Azure Percept Studio:

1 – Open Azure Percept Studio

2 – Head to Devices and you will be able to view the devices that are currently connected

3 – Choose your device and click on the Vision tab to see the different options for the vision module

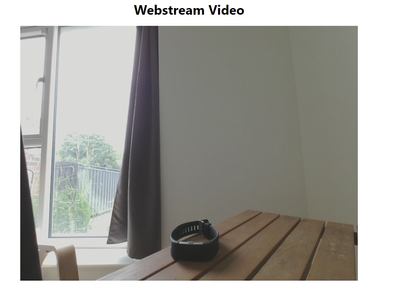

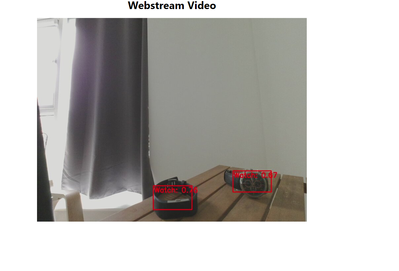

4 – Click on View your device stream and it will start streaming directly from the camera in real time. The device needs to be on the same network as the PC you are connecting from

5 – As can be seen, the device performed object detection automatically and recognized the object as my reading chair.
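If you want to work with detections like the one in step 5 programmatically rather than just watching the stream, a small filter on confidence is a natural first step. The sketch below is only an illustration: the payload shape, the `NEURAL_NETWORK` key and the `label`, `confidence` and `bbox` field names are assumptions I made for the example, not a schema confirmed in this post, and `confident_detections` is a hypothetical helper.

```python
import json

# Hypothetical message: the key and field names are assumptions for
# illustration, not a confirmed Azure Percept schema.
SAMPLE_MESSAGE = """
{
  "NEURAL_NETWORK": [
    {"label": "chair", "confidence": "0.91", "bbox": [0.10, 0.20, 0.55, 0.95]},
    {"label": "person", "confidence": "0.32", "bbox": [0.60, 0.10, 0.80, 0.70]}
  ]
}
"""


def confident_detections(message, threshold=0.5):
    """Return the detections whose confidence is at or above the threshold."""
    payload = json.loads(message)
    results = []
    for det in payload.get("NEURAL_NETWORK", []):
        # Convert defensively: the value may arrive as a string or a number.
        confidence = float(det["confidence"])
        if confidence >= threshold:
            results.append(
                {"label": det["label"], "confidence": confidence, "bbox": det["bbox"]}
            )
    return results


if __name__ == "__main__":
    for det in confident_detections(SAMPLE_MESSAGE):
        print(f'{det["label"]}: {det["confidence"]:.2f}')
```

With the sample message above, only the chair passes the default threshold of 0.5. Raising or lowering the threshold trades missed objects against false alarms, so it is worth tuning per use case.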

Deploying a Custom Vision Model:

Next, I tried the object detection model on several other objects and found that it didn’t recognize my watch, so I started working on a no-code Custom Vision model to identify watches

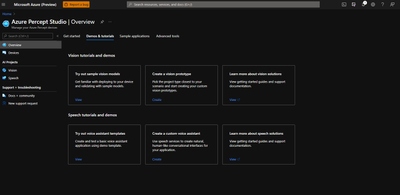

1 – Head to Overview and create a vision prototype

2 – Fill in the details and create your prototype

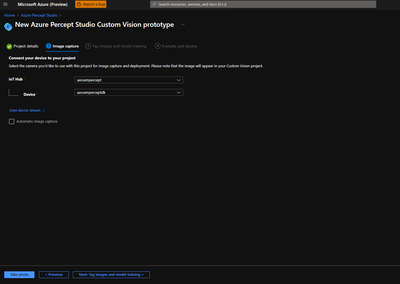

3 – In the Image capture tab I was able to take photos of my watch. You have the option to take the photos manually or to use automatic image capture. I ended up taking 15 photos

4 – In the next tab, click the Open project in Custom Vision link and you will be directed to your project gallery

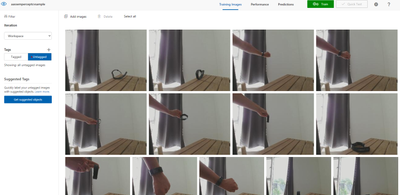

5 – Click on the untagged option to find all your saved photos

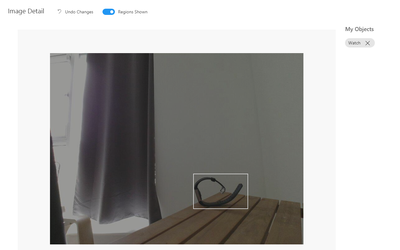

6 – Click on the photos and start tagging them

7 – Click Train to start training your Custom Vision Model

8 – Once the training is done you will be able to review the results. If you are satisfied, you can go back to the Azure portal

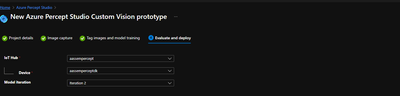

9 – Go to the final tab of the Custom Vision prototype setup and deploy your model

10 – Azure Percept was able to identify my watch as well as a different watch that wasn’t part of the training data set
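When reviewing the training results in step 8, Custom Vision reports precision and recall among its metrics. As a reminder of what those two numbers mean, here is a minimal sketch of how they are computed from raw counts. The counts are made up for illustration and are not the results from my watch model, and `precision_recall` is a hypothetical helper, not part of any Azure SDK.

```python
def precision_recall(true_pos, false_pos, false_neg):
    """Compute precision and recall from detection counts.

    precision: of everything the model flagged, how much was correct
    recall:    of everything that was really there, how much the model found
    """
    predicted = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / predicted if predicted else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall


if __name__ == "__main__":
    # Made-up counts: 12 watches found correctly, 3 false alarms, 4 missed.
    p, r = precision_recall(true_pos=12, false_pos=3, false_neg=4)
    print(f"precision={p:.2f} recall={r:.2f}")  # precision=0.80 recall=0.75
```

With only 15 training photos, both numbers can swing a lot from one training run to the next, so adding more varied images is usually the first thing to try if the results look weak.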

Impressions and next steps:

All in all, Azure Percept has been very easy to operate and to connect to Azure. Deploying the Custom Vision model was very streamlined, and I am looking forward to discovering more and diving deeper into the world of Azure Percept. We are also already in discussions with multiple customers on different use cases within the domain of critical infrastructure, where edge AI plays a huge role in combination with other components such as Azure Digital Twins and Azure Maps, which I am excited to explore over the coming period.

Learn more about Azure Percept:

- You can always head to the official Azure Percept Page

- For more details on the Azure Percept Devkit you can check the Azure Percept DK overview

- For the different tutorials on Azure Percept you can check the Microsoft documentation

- If you would like to watch videos, you can check the IoT Show by Olivier Bloch or the Azure Percept page on YouTube.