This post has been republished via RSS; it originally appeared at Microsoft Tech Community - Latest Blogs.

This article was written in conjunction with the MongoDB Developer Relations Team.

So you're building serverless applications with Microsoft Azure Functions, but you need to persist data to a database. What do you do about controlling the number of concurrent connections to your database from the function? What happens if the function currently connected to your database shuts down or a new instance comes online to scale with demand?

Serverless, whether applied to a function or a database, is designed for the modern application. Applications that scale on demand reduce maintenance overhead, and pay-as-you-go pricing reduces unnecessary costs.

In this tutorial, we’re going to see just how easy it is to interact with MongoDB Atlas using Azure Functions. If you’re not familiar with MongoDB, it offers a flexible document model that can be used to model your data for a variety of use cases and is easily integrated into most application development stacks. On top of the document model, MongoDB Atlas makes it just as easy to scale your database to meet demand as it does your Azure Function.

The language focus of this tutorial will be Node.js and as a result we will be using the MongoDB Node.js driver, but the same concepts can be carried between Azure Function runtimes.

Prerequisites

You will need to meet a few prerequisites before starting the tutorial:

- A MongoDB Atlas database deployed and configured with appropriate network rules and user rules.

- The Azure CLI installed and configured to use your Azure account.

- The Azure Functions Core Tools installed and configured.

- Node.js 14+ installed and configured to meet Azure Function requirements.

For this particular tutorial we'll be using a MongoDB Atlas serverless instance, since our interactions with the database will be fairly lightweight and we want to maintain scaling flexibility at the database layer of our application. Any Atlas deployment type, including the free tier, will work just fine, so we recommend you evaluate and choose the option best for your needs. It’s worth noting that you can also configure scaling flexibility for dedicated clusters with auto-scaling, which allows you to select minimum and maximum scaling thresholds for your database.

We'll also be referencing the sample data sets that MongoDB offers, so if you'd like to follow along make sure you install them from the MongoDB Atlas dashboard.

When defining your network rules for your MongoDB Atlas database, use the outbound IP addresses for the Azure data centers as defined in the Microsoft Azure documentation.

Create an Azure Functions App with the CLI

While we're going to be using the command line, most of what we see here can be done from the web portal as well.

Assuming you have the Azure CLI installed and it is configured to use your Azure account, execute the following:

az group create --name <GROUP_NAME> --location <AZURE_REGION>

You'll need to choose a name for your group as well as a supported Azure region. Your choice will not impact the rest of the tutorial as long as you're consistent throughout. It’s a good idea to choose a region closest to you or your users so you get the best possible latency for your application.

With the group created, execute the following to create a storage account:

az storage account create --name <STORAGE_NAME> --location <AZURE_REGION> --resource-group <GROUP_NAME> --sku Standard_LRS

The above command should use the same region and group that you defined in the previous step. This command creates a new and unique storage account to use with your function. The storage account won't be used locally, but it will be used when we deploy our function to the cloud.

With the storage account created, we need to create a new Function application. Execute the following from the CLI:

az functionapp create --resource-group <GROUP_NAME> --consumption-plan-location <AZURE_REGION> --runtime node --functions-version 4 --name <APP_NAME> --storage-account <STORAGE_NAME>

Assuming you were consistent and swapped out the placeholder items where necessary, you should have an Azure Function project ready to go in the cloud.

The commands used thus far can be found in the Microsoft documentation. We just changed anything .NET related to Node.js instead, but as mentioned earlier MongoDB Atlas does support a variety of runtimes including .NET and this tutorial can be referenced for other languages.

With most of the cloud configuration out of the way, we can focus on the local project where we'll be writing all of our code. This will be done with the Azure Functions Core Tools application.

Execute the following command from the CLI to create a new project:

func init MongoExample

When prompted, choose Node.js and JavaScript since that is what we'll be using for this example.

Navigate into the project and create your first Azure Function with the following command:

func new --name GetMovies --template "HTTP trigger"

The above command will create a Function titled "GetMovies" based off the "HTTP trigger" template. The goal of this function will be to retrieve several movies from our database. When the time comes, we'll add most of our code to the GetMovies/index.js file in the project.

There are a few more things that must be done before we begin writing code.

Our local project and cloud account are configured, but we’ve yet to link them together. We need to do that so our function deploys to the correct place.

Within the project, execute the following from the CLI:

func azure functionapp fetch-app-settings <FUNCTION_APP_NAME>

Don't forget to replace the placeholder value in the above command with your actual Azure Function name. The above command will download the configuration details from Azure and place them in your local project, particularly in the project's local.settings.json file.

Next execute the following from the CLI:

func azure storage fetch-connection-string <STORAGE_NAME>

The above command will add the storage details to the project's local.settings.json file.

For more information on these two commands, check out the Azure Functions documentation.

Install and Configure the MongoDB Driver for Node.js within the Azure Functions Project

Because we plan to use the MongoDB Node.js driver, we will need to add the driver to our project and configure it. Neither of these things will be complicated or time consuming to do.

From the root of your local project, execute the following from the command line:

npm install mongodb

The above command will install the MongoDB Node.js driver and record it as a dependency in our project's package.json file so that it can be installed automatically when we deploy our project to the cloud.

By now you should have a "GetMovies" function if you're following along with this tutorial. Open the project's GetMovies/index.js file so we can configure it for MongoDB:

const { MongoClient } = require("mongodb");

const mongoClient = new MongoClient(process.env.MONGODB_ATLAS_URI);

module.exports = async function (context, req) {
    // Function logic here ...
}

In the above snippet we are importing MongoDB and we are creating a new client to communicate with our cluster. We are making use of an environment variable to hold our connection information.

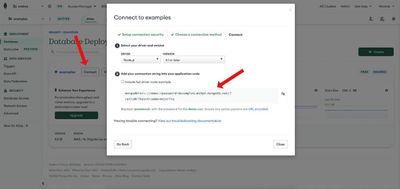

To find your URI, go to the MongoDB Atlas dashboard and click "Connect" for your cluster.

Bring this URI string into your project's local.settings.json file. Your file might look something like this:

{
    "IsEncrypted": false,
    "Values": {
        // Other fields here ...
        "MONGODB_ATLAS_URI": "mongodb+srv://demo:<password>@examples.mx9pd.mongodb.net/?retryWrites=true&w=majority",
        "MONGODB_ATLAS_CLUSTER": "examples",
        "MONGODB_ATLAS_DATABASE": "sample_mflix",
        "MONGODB_ATLAS_COLLECTION": "movies"
    },
    "ConnectionStrings": {}
}

The values in the local.settings.json file will be accessible as environment variables in our local project. We'll be completing additional steps later in the tutorial to make them cloud compatible.
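Since a missing value would otherwise only show up as a driver error on the first request, it can help to check the settings up front. The following is a minimal sketch under that assumption: `findMissingSettings` and `REQUIRED_SETTINGS` are hypothetical names introduced for illustration, not part of the Azure Functions or MongoDB APIs, and the setting names are the ones used in the file above.

```javascript
// Names of the settings this tutorial's function reads from the environment.
const REQUIRED_SETTINGS = [
    "MONGODB_ATLAS_URI",
    "MONGODB_ATLAS_DATABASE",
    "MONGODB_ATLAS_COLLECTION"
];

// Hypothetical helper: returns the required names that are absent or blank in `env`.
function findMissingSettings(env, required = REQUIRED_SETTINGS) {
    return required.filter((name) => !env[name] || env[name].trim() === "");
}

// Report problems once when the module loads rather than on the first query.
const missing = findMissingSettings(process.env);
if (missing.length > 0) {
    console.warn(`Missing settings: ${missing.join(", ")}`);
}
```

Placing a check like this at the top of GetMovies/index.js would surface a misconfigured environment as soon as the function loads, both locally and after deployment.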

The first phase of our installation and configuration of MongoDB Atlas is complete!

Interact with Your Data using the Node.js Driver for MongoDB

We're going to continue in our project's GetMovies/index.js file, but this time we're going to focus on some basic MongoDB logic.

In the Azure Function code we should have the following as of now:

const { MongoClient } = require("mongodb");

const mongoClient = new MongoClient(process.env.MONGODB_ATLAS_URI);

module.exports = async function (context, req) {
    // Function logic here ...
}

When working with a serverless function, you don't control whether an instance is already running and ready to serve a request or has to be created first. The point of serverless is that you're using it as needed.

We have to be cautious about how we use a serverless function with a database. Every database, MongoDB included, can only sustain a limited number of concurrent connections. In a traditional application you generally establish a single connection that lives for as long as your application does. That is not the case with an Azure Function. If you establish a new connection inside your function block, every invocation opens its own connection, and a popular function can exhaust the limit. Instead, we create the MongoDB client outside of the function and use that same client within it. Because code outside the exported function runs once per function instance rather than once per invocation, connections are only created when they don't already exist.
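To make the reuse pattern concrete, here is a small simulation that runs without a database. It is only a sketch: `FakeClient` is a hypothetical stand-in for `MongoClient` that counts how many client objects get constructed, and `getClient` and `simulateInvocations` are illustrative names rather than anything from the driver or the Functions runtime.

```javascript
// Hypothetical stand-in for MongoClient so this sketch runs without a database.
// It only counts how many client objects get constructed.
class FakeClient {
    constructor(uri) {
        this.uri = uri;
        FakeClient.created += 1;
    }
}
FakeClient.created = 0;

// Module scope: this variable outlives a single invocation and is shared by
// every invocation that the same running function instance handles.
let cachedClient = null;

function getClient(uri) {
    if (cachedClient === null) {
        cachedClient = new FakeClient(uri);
    }
    return cachedClient;
}

// Simulate several invocations of the handler on one warm instance and
// return how many clients were constructed in total.
function simulateInvocations(count) {
    for (let i = 0; i < count; i++) {
        getClient("mongodb+srv://example.invalid/");
    }
    return FakeClient.created;
}

console.log(simulateInvocations(5)); // prints 1
```

Had the client been constructed inside the loop body instead, the counter would grow with every invocation, which is the situation the module-level client in this tutorial avoids.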

Now we can skip into the function logic:

module.exports = async function (context, req) {
    try {
        // db() and collection() only return handles; no await is needed here.
        const database = mongoClient.db(process.env.MONGODB_ATLAS_DATABASE);
        const collection = database.collection(process.env.MONGODB_ATLAS_COLLECTION);
        // Empty filter matches everything, so cap the result at ten documents.
        const results = await collection.find({}).limit(10).toArray();
        context.res = {
            "headers": {
                "Content-Type": "application/json"
            },
            "body": results
        }
    } catch (error) {
        // Return the error message with a 500 status instead of the results.
        context.res = {
            "status": 500,
            "headers": {
                "Content-Type": "application/json"
            },
            "body": {
                "message": error.toString()
            }
        }
    }
}

When the function is executed, we make reference to the database and collection we plan to use. These are pulled from our local.settings.json file when working locally.

Next we do a `find` operation against our collection with an empty filter. An empty filter matches every document in the collection, so we limit the result to at most ten documents.

Any results that come back we use as a response. By default the response is plaintext, so by defining the header we can make sure the response is JSON. If an exception is thrown at any point, we catch it and return a 500 response containing the error message instead.
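If the function grows, the two response shapes could be factored into small helpers so the success and failure paths stay consistent. This is one possible sketch; `jsonResponse` and `errorResponse` are hypothetical names, and the objects they return simply mirror what the function above assigns to `context.res`.

```javascript
// Hypothetical helper: builds the object assigned to context.res.
function jsonResponse(body, status = 200) {
    return {
        status,
        headers: { "Content-Type": "application/json" },
        body
    };
}

// Hypothetical helper: same shape for failures, mirroring the catch block above.
function errorResponse(error) {
    return jsonResponse({ message: error.toString() }, 500);
}
```

With these in place, the handler body would reduce to `context.res = jsonResponse(results)` in the `try` block and `context.res = errorResponse(error)` in the `catch` block.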

Want to see what we have in action?

Execute the following command from the root of your project:

func start

When it completes, you'll likely be able to access your Azure Function at the following local endpoint: http://localhost:7071/api/GetMovies

Remember, we haven't deployed anything and we're just simulating everything locally.

If the local server starts successfully, but you cannot access your data when visiting the endpoint, double check that you have the correct network rules in MongoDB Atlas. Remember, you may have added the Azure Function network rules, but if you're testing locally, you may be forgetting your local IP in the list.

Deploy an Azure Function with MongoDB Support to the Cloud

If everything is performing as expected when you test your function locally, then you're ready to get it deployed to the Microsoft Azure cloud.

We need to ensure our local environment variables make it to the cloud. This can be done through the web dashboard in Azure or through the command line. We're going to do everything from the command line.

From the CLI, execute the following commands, replacing the placeholder values with your own values. The connection string contains characters such as & that most shells treat specially, so wrap that setting in quotes:

az functionapp config appsettings set --name <FUNCTION_APP_NAME> --resource-group <RESOURCE_GROUP_NAME> --settings "MONGODB_ATLAS_URI=<MONGODB_ATLAS_URI>"

az functionapp config appsettings set --name <FUNCTION_APP_NAME> --resource-group <RESOURCE_GROUP_NAME> --settings MONGODB_ATLAS_DATABASE=<MONGODB_ATLAS_DATABASE>

az functionapp config appsettings set --name <FUNCTION_APP_NAME> --resource-group <RESOURCE_GROUP_NAME> --settings MONGODB_ATLAS_COLLECTION=<MONGODB_ATLAS_COLLECTION>

The above commands were taken almost exactly from the Microsoft documentation.

With the environment variables in place, we can deploy the function using the following command from the CLI:

func azure functionapp publish <FUNCTION_APP_NAME>

It might take a few moments to deploy, but when it completes the CLI will provide you with a public URL for your functions.

Before you attempt to test them from cURL, Postman, or similar, make sure you obtain a "host key" from Azure to use in your HTTP requests.
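Azure Functions accepts an access key either in the `code` query string parameter or in the `x-functions-key` request header. As a rough sketch of the first option, the helper below appends a key to a function URL; `withFunctionKey` is a hypothetical name, and the URL and key shown are placeholders rather than real values.

```javascript
// Hypothetical helper: appends an access key as the `code` query parameter.
function withFunctionKey(functionUrl, key) {
    const url = new URL(functionUrl);
    // searchParams.set percent-encodes characters that are unsafe in a query string.
    url.searchParams.set("code", key);
    return url.toString();
}

// Placeholder values: substitute the URL printed by the publish command and your own key.
console.log(withFunctionKey("https://example.azurewebsites.net/api/GetMovies", "<HOST_KEY>"));
```

The resulting URL can be pasted into cURL, Postman, or a browser. Sending the key in the `x-functions-key` header instead keeps it out of URLs that may end up in logs.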

Conclusion

In this tutorial we saw how to connect MongoDB Atlas with Azure Functions using the MongoDB Node.js driver to build scalable serverless applications. While we didn't see it in this tutorial, there are many things you can do with the Node.js driver for MongoDB such as complex queries with an aggregation pipeline as well as basic CRUD operations.

To see more of what you can accomplish with MongoDB and Node.js, check out the MongoDB Developer Center.

With MongoDB Atlas on Microsoft Azure, developers receive access to the most comprehensive, secure, scalable, and cloud-based developer data platform in the market. Now, with the availability of Atlas on the Azure Marketplace, it’s never been easier for users to start building with Atlas while streamlining procurement and billing processes. Get started today through the Atlas on Azure Marketplace listing.