This post has been republished via RSS; it originally appeared at Microsoft Tech Community - Latest Blogs.

Mission planning is a set of techniques for executing actions on spacecraft resources and formulating tasks in accordance with requirements. Depending on the context of the mission, such as earth observation, communications, navigation, astronomical or cosmological purposes, mission planning involves the following:

- Define Objectives and Constraints

- Define Alternative Mission Concepts and Designs

- Evaluate Alternative Mission Concepts

- Define and Allocate System Requirements [1]

Each mission is unique because it involves distinctive goals, constraints, optimizations, operating environments, strategies and tactics, orbits, and many other considerations. However, one thing is certain: because the primary purpose of most spacecraft is to collect data to be processed and analyzed, cloud capabilities can uniquely help with scale, performance, resilience, security, and cost efficiency. In this article, we'll discuss mission planning from multiple perspectives and show how Azure can be used to help you across all aspects of mission planning. We’ll also discuss cloud concepts, patterns, and practices as they relate to spacecraft mission planning.

Missions can involve the following:

- Single satellite, single payload

- Single satellite, multi-payload

- Multi-satellite (constellation), single payload

- Multi-satellite (constellation), multi-payload

Satellite missions could focus on a particular point or broad area on the earth’s surface, or look toward outer space for astronomical and cosmological purposes. If the spacecraft is focused on a point location, then commands must be sent to the spacecraft to point at the target, capture the data, and downlink it to a ground station. If the spacecraft is meant to capture a specified area for earth observation or persistent monitoring, then it must be commanded to image that area for a designated contact time and then downlink the data to a ground station, where the individual images are converted into an imagery mosaic. In some cases, depending on the orbit, the area of interest, and the concept of operations, multiple spacecraft may be needed for a mission.
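To make the area-capture case more concrete, here is a minimal sketch of how an area of interest might be divided into overlapping capture footprints for a later mosaic. It assumes a flat, rectangular area measured in kilometres and a fixed rectangular sensor footprint; `Footprint`, `plan_captures`, and the 10% default overlap are hypothetical illustrations, not part of any Azure or mission planning API. A real planner would work in geodetic coordinates and account for orbit geometry and off-nadir pointing.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Footprint:
    """Ground footprint of a single capture, in kilometres (hypothetical sensor)."""
    width_km: float
    height_km: float


def tiles_needed(length_km: float, footprint_km: float, overlap: float) -> int:
    """Captures needed to span one axis with at least `overlap` fractional overlap."""
    if length_km <= footprint_km:
        return 1
    step_km = footprint_km * (1.0 - overlap)
    return math.ceil((length_km - footprint_km) / step_km) + 1


def centers(length_km: float, footprint_km: float, count: int) -> list[float]:
    """Evenly spaced capture centres; first and last footprints sit flush with the edges."""
    if count == 1:
        return [length_km / 2.0]
    span_km = length_km - footprint_km
    return [footprint_km / 2.0 + i * span_km / (count - 1) for i in range(count)]


def plan_captures(width_km: float, height_km: float, fp: Footprint,
                  overlap: float = 0.1) -> list[tuple[float, float]]:
    """Return (x, y) capture centres, row by row, covering the whole area."""
    nx = tiles_needed(width_km, fp.width_km, overlap)
    ny = tiles_needed(height_km, fp.height_km, overlap)
    xs = centers(width_km, fp.width_km, nx)
    ys = centers(height_km, fp.height_km, ny)
    return [(x, y) for y in ys for x in xs]
```

For a 100 km by 50 km area and a 20 km by 20 km footprint with 10% overlap, this yields 6 x 3 = 18 capture centres. Spreading the centres evenly means the actual overlap is never less than the requested minimum.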

Achieving a mission with a single satellite is the easiest of all options because coordination with other spacecraft is not required. However, there are always size, weight, and power constraints to consider for even the simplest mission. Additionally, considerations need to be made for pointing the sensors at the desired location, pointing the solar panels at the sun to generate enough power, maintaining attitude, and more. Achieving a mission with multiple satellites is more complicated because it involves coordination and orchestration in addition to all the constraints of a single spacecraft. Either way, the mission must be well understood so that the design and construction of the spacecraft are well suited to it.
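Power is often the first constraint to check. The sketch below estimates, for a circular low Earth orbit, the orbital period, the worst-case eclipse fraction, and whether a solar array can cover a constant load over one orbit. It assumes a beta angle of zero, a cylindrical Earth shadow, and no battery or power-conversion losses; the function names are hypothetical and the model is deliberately simplified.

```python
import math

# Standard Earth constants (refine for your mission's reference model).
MU_EARTH_KM3_S2 = 398600.4418  # Earth's gravitational parameter, km^3/s^2
R_EARTH_KM = 6378.137          # Earth's equatorial radius, km


def orbital_period_s(altitude_km: float) -> float:
    """Period of a circular orbit at the given altitude, in seconds."""
    r_km = R_EARTH_KM + altitude_km
    return 2.0 * math.pi * math.sqrt(r_km**3 / MU_EARTH_KM3_S2)


def eclipse_fraction(altitude_km: float) -> float:
    """Worst-case fraction of a circular orbit spent in eclipse.

    Assumes a beta angle of zero and a cylindrical Earth shadow.
    """
    r_km = R_EARTH_KM + altitude_km
    return math.asin(R_EARTH_KM / r_km) / math.pi


def orbit_energy_margin_wh(altitude_km: float, array_power_w: float,
                           load_power_w: float) -> float:
    """Energy generated in sunlight minus energy consumed over one orbit, in Wh.

    Ignores battery and conversion losses and assumes a constant load.
    """
    period_h = orbital_period_s(altitude_km) / 3600.0
    sunlit_h = period_h * (1.0 - eclipse_fraction(altitude_km))
    generated_wh = array_power_w * sunlit_h
    consumed_wh = load_power_w * period_h
    return generated_wh - consumed_wh
```

At 500 km this gives a period of roughly 94.6 minutes with about 38% of it in eclipse, so a 100 W array covers a 50 W average load with some margin but not a 70 W load.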

Tasking a satellite requires a set of algorithms whether the tasking is static or dynamic. These algorithms can be deterministic, heuristic, or search algorithms that optimize the set of tasks to perform. Ideally, these algorithms are wrapped into an application that mission planners can use to create the mission, model the spacecraft, and determine power usage and orbital parameters. Designing the application involves many considerations. In this article, we’ll focus on the application development aspect of Mission Planning.
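As one illustration of the heuristic category, the sketch below greedily accepts candidate tasks in order of priority per watt-hour, skipping any task that would overlap an already accepted task or exceed an energy budget. The `Task` fields, the ranking rule, and the single energy budget are assumptions chosen for brevity. A greedy pass like this is fast but not guaranteed to be optimal, which is where search-based or exact optimizers come in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    start_s: float    # task start time, seconds from the start of the plan
    end_s: float      # task end time, seconds
    priority: float   # higher is more valuable
    energy_wh: float  # energy the task consumes


def overlaps(a: Task, b: Task) -> bool:
    """True if two tasks share any time (the spacecraft performs one task at a time)."""
    return a.start_s < b.end_s and b.start_s < a.end_s


def greedy_schedule(candidates: list[Task], energy_budget_wh: float) -> list[Task]:
    """Accept tasks by descending priority per Wh, respecting time and energy limits."""
    ranked = sorted(
        candidates,
        key=lambda t: t.priority / max(t.energy_wh, 1e-9),
        reverse=True,
    )
    plan: list[Task] = []
    used_wh = 0.0
    for task in ranked:
        if used_wh + task.energy_wh > energy_budget_wh:
            continue  # would exceed the energy budget
        if any(overlaps(task, chosen) for chosen in plan):
            continue  # conflicts with an already accepted task
        plan.append(task)
        used_wh += task.energy_wh
    return sorted(plan, key=lambda t: t.start_s)  # return in execution order
```

Swapping the ranking key or adding more constraint checks changes the heuristic without changing the overall structure.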

Application Development

Now that we’ve discussed the fundamentals of mission planning, let’s turn our attention to how this would be implemented in the cloud. All of the components we’ve talked about so far can be decomposed into software components, and the code can be written in an Integrated Development Environment. Likewise, Continuous Integration, Continuous Deployment, and Continuous Delivery pipelines can be established and automated alongside automated testing, integrated security analysis, version control, and other engineering practices. In the following sections, we’ll provide a high-level overview along with some guidance on how to build these components with Microsoft’s development tools and common DevOps practices.

Domain-Driven Design of Mission Planning Software

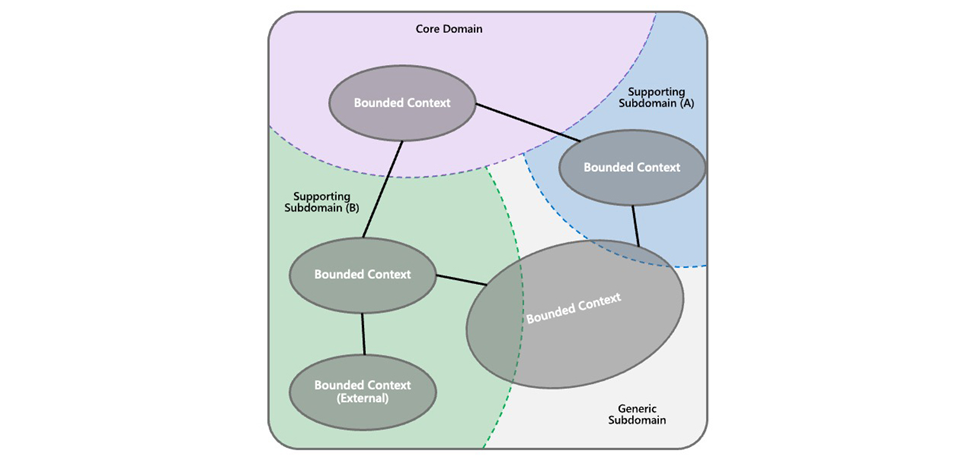

Software tenets and patterns provide a means to solve common problems. The Microsoft Azure Well-Architected Framework (WAF) defines tenets for building a system with the following in mind: Reliability, Security, Cost Optimization, Operational Excellence, and Performance Efficiency. There are also ten design principles that help achieve these tenets. The tenets roll up into application architecture styles. One of the most common is the microservices architecture style, which organizes an application around business domains, each defined by a bounded context using Domain-Driven Design (DDD). Using DDD, developers translate the business domains into abstractions called domain models, which are decomposed into small software components called microservices. These models encapsulate complex business logic and form a correspondence between the business reality and the code.

Space mission planning involves several business domains. The following are examples of domains relevant to a mission planning application:

- Mission Domain

- Spacecraft Domain

- Power Domain

- Orbit Domain

- Command & Data Handling Domain

- Guidance, Navigation & Control Domain

- Communications Domain

- Propulsion Domain

- Thermal Domain

Each of these domains can be decomposed into individual microservices within its bounded context. For example, the Power Domain would contain microservices that represent solar panels, battery packs, and power processors. Each of these microservices contains properties and methods that define the service and what it can do. The next step is to define the relationships between these microservices, if any. Ideally, microservices are loosely coupled, with few or no dependencies on one another, so that each can iterate as fast as needed. See here for more information on how to design the system using this pattern.
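A minimal sketch of two Power Domain models follows, showing the kind of properties and methods each service might own. The class names, the approximate 1361 W/m² solar constant default, and the simple clamped battery model are illustrative assumptions rather than a prescribed design; in a microservices deployment, each model would sit behind its own service API instead of being imported directly by other domains.

```python
from dataclasses import dataclass

SOLAR_CONSTANT_W_M2 = 1361.0  # approximate solar irradiance at 1 AU


@dataclass
class SolarPanel:
    area_m2: float
    efficiency: float  # fraction of incident power converted to electrical power

    def power_w(self, sun_incidence_cos: float = 1.0,
                irradiance_w_m2: float = SOLAR_CONSTANT_W_M2) -> float:
        """Electrical output for a given cosine of the sun incidence angle."""
        # A negative cosine means the sun is behind the panel, so output is zero.
        return self.area_m2 * self.efficiency * irradiance_w_m2 * max(sun_incidence_cos, 0.0)


@dataclass
class BatteryPack:
    capacity_wh: float
    charge_wh: float

    def state_of_charge(self) -> float:
        """Current charge as a fraction of capacity."""
        return self.charge_wh / self.capacity_wh

    def apply(self, net_power_w: float, duration_s: float) -> float:
        """Charge (positive power) or discharge (negative power), clamped to [0, capacity]."""
        delta_wh = net_power_w * duration_s / 3600.0
        self.charge_wh = min(self.capacity_wh, max(0.0, self.charge_wh + delta_wh))
        return self.charge_wh
```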

Azure services for Mission Planning

Now that we’ve defined what the microservices need to be, we can turn our attention to building them. For that, we’ll need product management tools, code development tools, testing, security, a means to containerize, orchestrate, monitor, and observe the microservices, and a means to host them in the cloud.

To manage the product, we can use GitHub and/or Azure DevOps (see here). To write the code, we can use Visual Studio Code, Visual Studio, or GitHub Codespaces. The choice comes down to many factors, such as the development language of choice: Python, .NET, and Java are all great options. A free book on using .NET is available from Microsoft here. Azure DevOps and GitHub support Continuous Integration and Continuous Deployment, which let you automatically build the code on each commit and deploy it into environments such as development, testing, staging, or production. Further, your code can be scanned for security vulnerabilities during the CI build, and the application can be monitored for threats with tools such as Azure Sentinel. Likewise, you can monitor the app with Azure Monitor, perform load testing with Azure Load Testing, and even perform chaos engineering with Azure Chaos Studio. In addition to these tools, several reference architectures in the Azure Architecture Center are worth considering:

- DevSecOps with GitHub Security

- Build and deploy on AKS using DevOps and GitOps

- Microservices with AKS and Azure DevOps

Orchestrating your microservices is another key component of any modern, cloud-native solution. Today, Kubernetes is the go-to solution for hosting, orchestrating, and monitoring these services. First, the microservices need to be containerized with tools such as Docker, Podman, or containerd. Once containerized, they can be deployed to Kubernetes, an open-source container orchestration platform. While Kubernetes orchestrates the microservices, it doesn’t provide the underlying hardware. That’s where Azure comes in with a variety of compute options. Microsoft’s Azure Kubernetes Service (AKS) is a managed platform-as-a-service offering for running and orchestrating these services. An AKS cluster can be spun up in minutes from the Azure portal, the Azure Command-Line Interface, or Azure PowerShell, or with code via Azure Resource Manager templates, Bicep, or Terraform. The major benefit of using AKS is that it abstracts away the hardware so you can focus on application development and orchestration. Once the AKS cluster is running, your applications can be packaged and deployed with Helm, built on runtimes such as Dapr, and written in your favorite language. Your microservice container images can be stored in Azure Container Registry.
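To show what one containerized service might look like before it is packaged for AKS, here is a minimal sketch of a hypothetical Power Domain service built only on Python's standard library. It exposes a `/healthz` endpoint that a Kubernetes liveness or readiness probe could target, plus a placeholder `/power/status` endpoint; the paths, the port 8080 default, and the payload values are assumptions for illustration.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def route(path: str) -> tuple[int, dict]:
    """Map a request path to an HTTP status code and JSON payload."""
    if path == "/healthz":
        return 200, {"status": "ok"}
    if path == "/power/status":
        # Placeholder values; a real service would query its domain model or data store.
        return 200, {"battery_soc": 0.8, "array_power_w": 100.0}
    return 404, {"error": "not found"}


class PowerServiceHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        status, payload = route(self.path)
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8080) -> None:
    """Listen on all interfaces so the container port can be exposed to the cluster."""
    ThreadingHTTPServer(("0.0.0.0", port), PowerServiceHandler).serve_forever()
```

The container's entrypoint would call `serve()`. Keeping the routing in a plain `route` function makes it easy to unit test in the CI build without starting a server; a production service would more likely use a web framework.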

Mission Operations Center

To enable a mission, a Mission Operations Center is established to model the mission and simulate outcomes, as well as to manage telemetry data, scheduling, preprocessing, backup, fault identification, and more. These functions have traditionally been managed with on-premises hardware. However, with the advent of the cloud, all this server infrastructure can instead be hosted in Azure. As noted in this article, that includes the ground station, through a platform-as-a-service solution such as Azure Orbital Ground Station. As a result, all of the mission infrastructure can be hosted in the cloud, which eliminates the need to downlink to on-premises infrastructure and reduces latency. The data is downlinked to an Azure region where the mission planning and other apps can also be hosted.
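Fault identification often starts with simple limit checking on incoming telemetry. The sketch below checks each telemetry frame against low and high limits and reports missing or out-of-range values. The channel names and limit values in `LIMITS` are hypothetical placeholders, not real spacecraft limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    low: float
    high: float


# Hypothetical channels and limits, for illustration only.
LIMITS = {
    "battery_voltage_v": Limit(24.0, 33.6),
    "panel_temp_c": Limit(-80.0, 90.0),
}


def check_frame(frame: dict[str, float], limits: dict[str, Limit]) -> list[str]:
    """Return a human-readable fault for every missing or out-of-limit channel."""
    faults = []
    for channel, limit in limits.items():
        value = frame.get(channel)
        if value is None:
            faults.append(f"{channel}: missing")
        elif not limit.low <= value <= limit.high:
            faults.append(f"{channel}={value} outside [{limit.low}, {limit.high}]")
    return faults
```

In practice, a check like this would run as frames arrive and feed alerts into the operations center's monitoring, with more advanced anomaly detection layered on top.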

Visualization, Simulation and Digital Twins

Another key component of mission planning is visualizing the mission, which helps communicate complex mission components, improve collaboration, identify unseen constraints, improve operational decision making, understand the concept of operations (CONOPS), and reduce risk and cost. Typically, this is accomplished with a mission simulation. In the case of earth observation, the simulation includes models, inputs, outputs, and observation types. The main models include energy, time utilization, system performance, scheduling, background characteristics, search logic, and data utilization. Inputs include scenarios, tasking, system parameters, and constellation parameters. Outputs include animation sequences, observation data, system parameters, energy usage, pointing statistics, time usage, gap statistics, response times, and cloud cover. The simulation is calibrated against a baseline configuration and then run multiple times to explore alternative outcomes, which helps us understand whether we need to revise the requirements, evaluate alternatives, or change our cost or timeline.
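The idea of running a simulation many times to explore alternative outcomes can be illustrated with a toy Monte Carlo experiment. This sketch varies only one input: it assumes each observation opportunity is independently blocked by cloud cover with a fixed probability, and it reports the mean, minimum, and maximum number of usable observations across runs. Real mission simulations couple many more models (energy, scheduling, pointing, and so on); the function names and the independence assumption here are purely illustrative.

```python
import random
import statistics


def simulate_run(opportunities: int, cloud_probability: float, rng: random.Random) -> int:
    """One run: count opportunities not blocked by cloud (each blocked independently)."""
    return sum(1 for _ in range(opportunities) if rng.random() >= cloud_probability)


def monte_carlo(opportunities: int, cloud_probability: float,
                runs: int = 1000, seed: int = 42) -> tuple[float, int, int]:
    """Repeat the run and summarize usable observations as (mean, min, max)."""
    rng = random.Random(seed)  # seeded so results are reproducible across runs
    results = [simulate_run(opportunities, cloud_probability, rng) for _ in range(runs)]
    return statistics.mean(results), min(results), max(results)
```

Comparing the spread of results against a requirement such as a minimum number of usable observations per day is one way to decide whether the baseline configuration needs to change.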

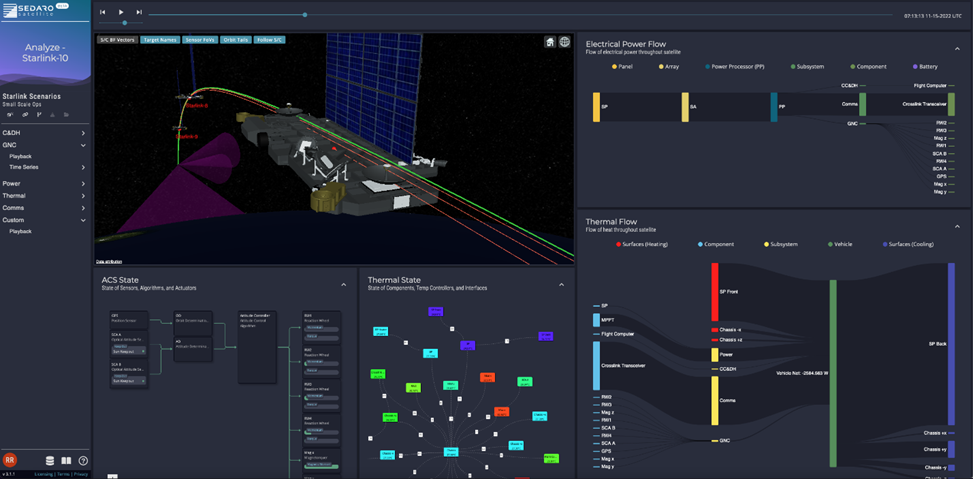

Sedaro is a digital twin software company focused on leveraging modern software frameworks and cloud computing to deliver the next step in the evolution of system simulation and virtualization tools. Sedaro is delivering this step forward in the space market with Sedaro Satellite, a SaaS tool that delivers:

- a scalable cloud simulation architecture for massive computing power,

- an automatically orchestrated software framework for efficient physics engine development,

- version and access control infrastructure for cross-cutting collaboration,

- OpenAPI compliant interfaces for turnkey interoperability, and

- distributed data streaming for immersive, human-in-the-loop simulation.

With Sedaro Satellite, engineers and operators can simulate their entire space architecture in an integrated, multiphysics simulation. This means not just simulating the outward dynamics of each spacecraft in a constellation but every subsystem and component on each spacecraft - including electrical power, temperatures, data flows, sensors, controllers, and propulsion systems. Sedaro would not be able to deliver Sedaro Satellite to customers quickly and at scale without cloud infrastructure. Sedaro is hosting its Sedaro Satellite 3.0 multi-tenant SaaS beta instance on Azure, along with a separate private cloud instance for a government customer. Sedaro Satellite leverages Azure Kubernetes Service (AKS), Application Gateway, Azure Blob Storage, and several Azure Database instances.

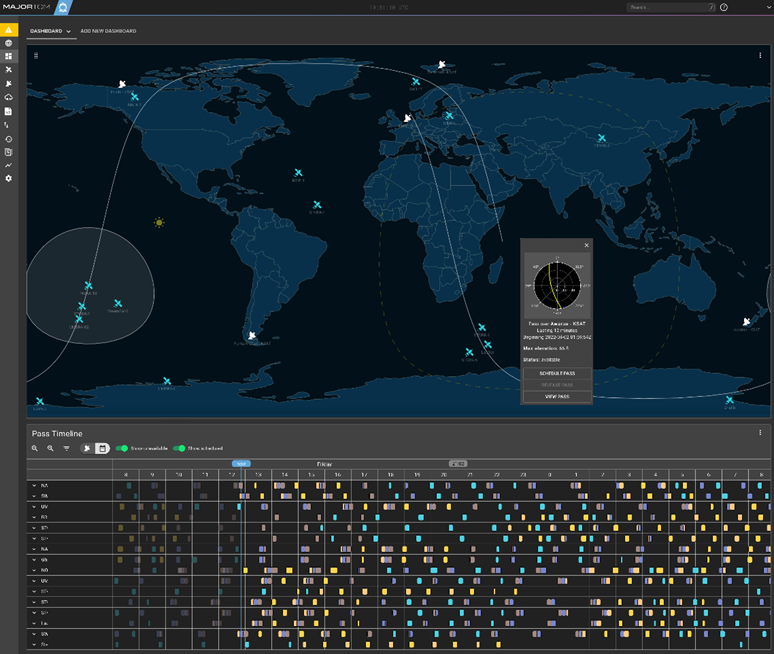

To build a scalable, flexible, and reliable mission control application for satellite operators, Xplore’s Major Tom needed to be developed in the cloud from the start. Leveraging the cloud, Major Tom delivers the features modern satellite operators are looking for in a mission control system. Through powerful APIs and innovative features, Major Tom allows operators to perform ground station scheduling, satellite tasking, and telemetry monitoring, all while saving money and time.

Major Tom uses Azure in multiple regions to support customers with varying compliance requirements. The application is deployed into managed Azure Kubernetes Service (AKS) and supported by Azure Container Registry. Storage needs are simplified by using Azure Database for PostgreSQL along with Azure managed disks and snapshots, all connected and secured by Azure Virtual Network.
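Ground station scheduling, one of the Major Tom capabilities mentioned above, can be illustrated with a classic interval scheduling sketch. This is not Major Tom's implementation; it is a generic earliest-finish-first greedy that, for a single antenna, selects the maximum number of non-overlapping passes. The `Pass` type and its acquisition-of-signal (AOS) and loss-of-signal (LOS) fields are assumptions; real schedulers also weigh priorities, antenna slew and setup time, and multiple antennas.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pass:
    satellite: str
    aos_s: float  # acquisition of signal, seconds
    los_s: float  # loss of signal, seconds


def schedule_antenna(passes: list[Pass]) -> list[Pass]:
    """Earliest-finish-first selection of non-overlapping passes for one antenna."""
    chosen: list[Pass] = []
    antenna_free_at = float("-inf")
    for p in sorted(passes, key=lambda p: p.los_s):
        if p.aos_s >= antenna_free_at:
            chosen.append(p)
            antenna_free_at = p.los_s  # antenna is busy until this pass ends
    return chosen
```

Sorting by end time is what makes this greedy choice optimal for maximizing the number of contacts; ranking by priority instead would need a different algorithm, such as weighted interval scheduling.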

Another example of how the cloud can be used to support mission planning is the use of Digital Twins. The Azure IoT team developed a Digital Twin which pulls data from the International Space Station using this reference architecture and sample code. The result is demonstrated in this image:

One last example is a prototype from Esri. See the SatelliteXplorer here. Under the Search option, type in Aqua, for example, and you’ll zoom in on the satellite. The geo-visualization is based on Esri’s ArcGIS JavaScript API along with satellite.js.

In conclusion, Microsoft has a wide range of capabilities to aid spacecraft mission planning. Along with our partners and open-source tools, mission planning can be taken to the next level with cloud-native patterns, programming languages, tools, and culture. For more information, please feel free to contact the Azure Space team via email or leave a comment below.

--

Contributions also from Robbie Robertson, PhD, CEO, Sedaro, and Tyler Browder, Business Development Director, Xplore, Inc.

Sources: