This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

As an Azure Sentinel user, you may have encountered the need to store and search your log data for extended periods of time. This could be due to regulatory requirements or simply as a means of maintaining a secure backup of your log data. In this blog, we'll examine the various options available for storing and searching Sentinel logs beyond the default 90-day retention period. We'll cover the main features of each option and provide guidance on how to implement them in your organization.

Below are the main options for storing and searching Sentinel logs, with the scenario each one fits best. The sections that follow cover their capabilities and the key criteria for choosing between them.

- Azure Data Explorer (ADX) - recommended for users who need to frequently query the data

- Retention and Archive Policies in Log Analytics Workspaces - recommended for users who want to query the data occasionally

- Exporting Data to an Azure Storage Account - recommended for users who rarely need to query the data and have no specific query requirements

- Storage account export via Logic Apps - recommended for users who rarely need to query the data and want their storage account in a different region than their Log Analytics workspace

Let's start.

---

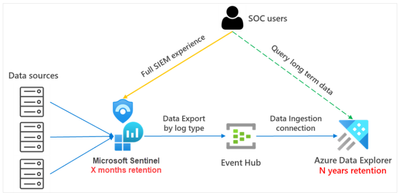

Azure Data Explorer (ADX)

Azure Data Explorer is a cost-effective option for storing Sentinel logs that lets you keep querying your data as it grows. If you need to query older data regularly, for example during investigations, Azure Data Explorer could be the ideal solution. Its flexible querying capabilities make it well suited to running periodic investigations on your data.
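Because ADX uses KQL, you can also reach it from the Sentinel query window with the adx() cross-service function. The sketch below assumes a hypothetical cluster URI and database name (mycluster.westeurope, SentinelArchive) and an ADX table named SecurityEvent with the same columns as the Sentinel table; replace them with your own.

```kql
// Run from the Microsoft Sentinel / Log Analytics query window.
// Hypothetical cluster URI and database: replace with your ADX cluster and database.
adx("https://mycluster.westeurope.kusto.windows.net/SentinelArchive").SecurityEvent
// Look only at data older than the workspace's 90-day retention.
| where TimeGenerated between (ago(365d) .. ago(90d))
// 4625 = failed logon
| where EventID == 4625
| summarize FailedLogons = count() by Account, bin(TimeGenerated, 1d)
```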

Azure Data Explorer (ADX) is a powerful big data analytics platform optimized for log and telemetry analytics. It uses Kusto Query Language (KQL), the same query language as Microsoft Sentinel, which makes it a good choice for storing Sentinel data. Data ingested into ADX is stored in a compressed, columnar, indexed format, so it can be queried efficiently even at large volumes. For more information on using ADX with Sentinel, check out the official documentation page and the ADX blog.
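As a sketch of the ADX side, the control commands below create a destination table and set its retention and caching policies. The table name and column list are hypothetical (mirror the Sentinel table you actually export), and the ingestion pipeline that fills the table is not shown. Run each command separately in the ADX query editor.

```kql
// Hypothetical destination table: match the columns of the exported Sentinel table.
.create table SecurityEvent (TimeGenerated: datetime, Computer: string, Account: string, EventID: int, IpAddress: string)

// Keep the data for two years (730 days) before it is deleted.
.alter-merge table SecurityEvent policy retention softdelete = 730d recoverability = enabled

// Keep only the most recent 30 days in the hot cache; older data remains queryable from cold storage.
.alter table SecurityEvent policy caching hot = 30d
```

The caching policy is the main cost lever here: queries over data outside the hot cache are slower, but you don't pay for hot-cache capacity to hold years of logs.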

---

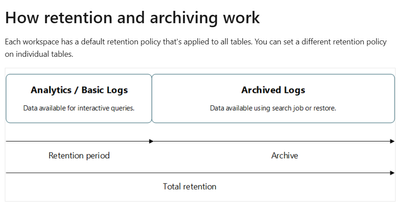

Retention and Archive Policies in Log Analytics Workspaces

Retention policies in a Log Analytics workspace determine when data is removed or archived. They help you manage the cost of storing data in the workspace while making sure the data you need is available when you need it. Archiving lets you keep older, less frequently used data in your workspace at a reduced cost. If you only need to query data occasionally, consider the Log Analytics archive option.
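Before deciding which tables to archive, it can help to see which ones drive your ingestion volume. This sketch uses the workspace's Usage table, where Quantity is reported in MB; the 30-day window is an arbitrary choice.

```kql
// Billable ingestion per table over the last 30 days.
Usage
| where TimeGenerated > ago(30d)
| where IsBillable == true
// Quantity is in MB, so divide by 1000 to get GB.
| summarize IngestedGB = sum(Quantity) / 1000 by DataType
| sort by IngestedGB desc
```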

During the interactive retention period, data is available for monitoring, troubleshooting, and analytics. When you no longer use the logs, but still need to keep the data for compliance or occasional investigation, archive the logs to save costs.

Archived data stays in the same table, alongside the data that's available for interactive queries. When you set a total retention period that's longer than the interactive retention period, Log Analytics automatically archives the relevant data at the end of the interactive retention period.

You can learn much more about retention and archive in the official documentation pages.

Querying Archived Data

When you archive data in a Log Analytics workspace, it stays in the same table as the data that's available for interactive queries, so you can still access and analyze it while paying a lower storage cost. There are several ways to query archived data, depending on your use case.

Run queries directly on archived data

You can run queries directly on archived data within a specific time range on any Analytics table, as long as the log queries you run on the source table can complete within the 10-minute log query timeout. Note that querying Basic Logs incurs a charge for each query.
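A minimal sketch of a query bounded to a specific time range; the SecurityEvent table, the dates, and the event ID filter are illustrative assumptions. Keeping the window narrow and filtering early is what gives the query a chance to finish within the 10-minute timeout.

```kql
// Hypothetical investigation window: replace with the period you need.
SecurityEvent
| where TimeGenerated between (datetime(2023-01-01) .. datetime(2023-01-08))
// Filter as early as possible to reduce the data scanned. 4625 = failed logon.
| where EventID == 4625
| summarize FailedLogons = count() by Account, Computer
| top 20 by FailedLogons
```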

Restore option

The restore option makes a specific time range of data in a table available in the hot cache for high-performance queries. Use the restore option when you have a temporary need to run many queries on a large volume of data or when the log queries you run on the source table can't complete within the log query timeout of 10 minutes.

When you restore data, you specify the source table that contains the data you want to query and the name of the new destination table to be created. The restore operation creates the restore table and allocates additional compute resources for querying the restored data with high-performance queries that support full KQL. The destination table provides a view of the underlying source data but doesn't affect it in any way. The table has no retention setting, so you must explicitly dismiss the restored data when you no longer need it. Restore has some limitations; for details, see the official documentation here.
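Once a restore completes, you query the destination table like any other table. In this sketch, SecurityEvent_RST is a hypothetical restore of SecurityEvent (restore table names end with _RST), and the threshold of 10 failed logons is arbitrary.

```kql
// Hypothetical restore table created from SecurityEvent for the investigation window.
SecurityEvent_RST
// 4624 = successful logon, 4625 = failed logon
| where EventID in (4624, 4625)
| summarize Successful = countif(EventID == 4624), Failed = countif(EventID == 4625) by Account
| where Failed > 10
| order by Failed desc
```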

Use a search job

When the log query timeout of 10 minutes isn't sufficient to search through large volumes of data or if you're running a slow query, you can use a search job. Search jobs also let you retrieve records from Archived Logs and Basic Logs tables into a new log table you can use for queries.

A search job sends its results to a new table in the same workspace as the source data. The results table is available as soon as the search job begins, but it may take time for results to start appearing. The search job results table is an Analytics table that is available for log queries and other Azure Monitor features that use tables in a workspace. The table uses the retention value set for the workspace, but you can modify this value after the table is created. Search jobs also have some limitations; for details, see the official documentation here.
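A search job pairs a query on the source table with a results table. The sketch shows both halves under stated assumptions: the first query is the kind of filter a search job runs (search jobs accept only a subset of KQL operators, such as where, extend, and project), and SecurityEvent_SRCH is a hypothetical results table name (results table names end with _SRCH). Run each query separately.

```kql
// 1) Search job query against the source table: filter and project only.
SecurityEvent
| where EventID == 4625
| where Account has "admin"
| project TimeGenerated, Computer, Account, IpAddress

// 2) After results start arriving, query the hypothetical results table with full KQL.
SecurityEvent_SRCH
| summarize FailedLogons = count() by Account, IpAddress
| order by FailedLogons desc
```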

Conclusion

In conclusion, there are several ways to query archived data in a Log Analytics workspace, depending on your use case and the amount of data you need to query. You can run queries directly on archived data, use the restore option for high-performance queries, or use a search job to retrieve records from archived tables. However, it is important to keep in mind the limitations of each method and consult the official documentation for more information.

---

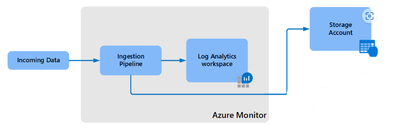

Exporting Data to an Azure Storage Account

If you need to store data for long-term retention and don't have specific query requirements, exporting data to an Azure Storage account is a good option. Exporting data from Azure Monitor to an Azure Storage account enables low-cost retention and the ability to replicate logs to other regions.

Data Export Rules

Data export in a Log Analytics workspace lets you continuously export data per selected tables in your workspace. You can export to an Azure Storage account of type StorageV1 or later, in the same region as your workspace. You can also replicate your data to other storage accounts in other regions by using any of the Azure Storage redundancy options, including GRS and GZRS.

After you've configured data export rules in a Log Analytics workspace, new data for tables in rules is exported from the Azure Monitor pipeline to your storage account as it arrives.

Creating an Export Rule

When you create an export rule, data is exported as it reaches Azure Monitor, to a destination located in the workspace's region. A container is created in the storage account for each table, named "am-" followed by the table name. For example, data from the "SecurityEvent" table is sent to a container named "am-SecurityEvent".

You can also set the storage account's default access tier to Cool in advance, which costs less than Hot storage. You can learn more about the tier options here. Within the storage account, you can move data between tiers using lifecycle management. Azure Blob Storage lifecycle management offers a rich, rule-based policy that you can use to transition your data to the most appropriate access tier and to expire data at the end of its lifecycle. You can learn more about lifecycle management here.

See more details on exporting to a storage account, and its limitations, here.

Querying the Data

You may still need to query specific logs. With KQL, you can query exported logs that are stored in the Hot tier.

To look up data in an external file or log, KQL offers the "externaldata" operator. externaldata lets you use files as if they were Azure Sentinel tables, so you can pre-process a file, for example by filtering and parsing it, before performing the lookup.

Let's demonstrate this with the AADManagedIdentitySignInLogs table.

The AADManagedIdentitySignInLogs table was exported from Sentinel to a storage account via an export rule.

In the storage account, select the blob you want to query and copy its Blob SAS URL.

Open the Log Analytics workspace, go to the Logs tab and run the following query:

```kql
// Replace the placeholder with the Blob SAS URL copied in the previous step.
let AADManagedIdentitySignInLogs = externaldata (TimeGenerated:datetime, OperationName:string, Category:string, LocationDetails:string, ServicePrincipalName:string)
    [@"SAS TOKEN URL FOR BLOB"]
    with (format="multijson", recreate_schema=true);
AADManagedIdentitySignInLogs
```
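Because externaldata returns an ordinary table, you can keep filtering and parsing the exported data in the same query. This sketch assumes two hypothetical SAS URLs for blobs from the same export (externaldata accepts a list of URIs and reads them as one table) and that LocationDetails holds a JSON object with city and countryOrRegion fields, as in the Azure AD sign-in log schema; adjust the names to your data.

```kql
// Hypothetical SAS URLs: both blobs are read as a single table.
let AADManagedIdentitySignInLogs = externaldata (TimeGenerated:datetime, OperationName:string, Category:string, LocationDetails:string, ServicePrincipalName:string)
    [
        @"SAS URL FOR FIRST BLOB",
        @"SAS URL FOR SECOND BLOB"
    ]
    with (format="multijson");
AADManagedIdentitySignInLogs
// LocationDetails is declared as a string, so parse it into a dynamic object first.
| extend Location = parse_json(LocationDetails)
| extend City = tostring(Location.city), Country = tostring(Location.countryOrRegion)
| summarize SignIns = count(), LastSeen = max(TimeGenerated) by ServicePrincipalName, Country, City
| order by SignIns desc
```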

---

Storage account export via Logic Apps

Azure Logic Apps is another option for storing and retrieving data from a Log Analytics workspace. It allows you to specify which data you want to retrieve from the Log Analytics workspace and send it to a storage account. This process can be set up to occur on a regular schedule.

Use Cases

Consider using this option if you need to:

- Specify which data you want to archive

- Send it to a storage account in a different region than your Log Analytics workspace

How it works

This procedure uses the Azure Monitor Logs connector, which lets you run a log query from a logic app and use its output in other actions in the workflow. The Azure Blob Storage connector is used to send the query output to storage. When you export data from a Log Analytics workspace, it's important to limit the amount of data processed by your Logic Apps workflow. Filtering and aggregating your log data in the query can help reduce the required data. By following this method you can archive your specific data, and it also allows you to send it to different regions.
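For the Azure Monitor Logs connector step, a query along these lines keeps the exported payload small by both filtering and aggregating. It is a sketch assuming a daily recurrence trigger and the SigninLogs table; the table, the failed-sign-in filter, and the grouping columns are illustrative choices.

```kql
// Export only the previous full day (UTC), aligned to day boundaries.
SigninLogs
| where TimeGenerated >= startofday(ago(1d)) and TimeGenerated < startofday(now())
// ResultType "0" means success; keep failed sign-ins only.
| where ResultType != "0"
// Aggregate so the workflow handles one row per user/app/IP instead of every event.
| summarize FailedSignIns = count(), FirstSeen = min(TimeGenerated), LastSeen = max(TimeGenerated)
    by UserPrincipalName, AppDisplayName, IPAddress
```

Aligning the window with startofday() means consecutive daily runs cover back-to-back days without overlapping.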


Read more about this option here.

Summary

In summary, Azure provides several options for storing and querying security logs for long-term retention, each with its own features and capabilities. Azure Data Explorer (ADX) is the most powerful option, with fast and scalable data exploration, real-time data ingestion, and advanced search capabilities. Log Analytics retention and archive policies are another good choice, keeping older data in the workspace at a reduced cost while still letting you query it. Azure Storage accounts provide a low-cost storage option but have limited search capabilities. With Logic Apps, you can retrieve specific data from Log Analytics and store it in Azure Storage accounts, including accounts in other regions.