This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

As more industries digitize their operations, they need simulation to support these digital transformations. Simulation helps them meet business and operational goals by letting them change environment variables and predict the outcomes.

Azure Digital Twins (ADT) is a powerful way of simulating changes in the real world to reduce costs and operational overhead. For example, a manufacturing factory can have a representation in Azure Digital Twins, and customers can use the digital representation to observe its operations with the existing setup. If customers want to simulate changes and compare the cost of operation, quality of product, or time taken to build a product, they can use ADT to tweak the models and properties of their digital representations and observe the impact of these changes on the simulation.

Azure Digital Twins already supports APIs to create new models, twins, and relationships. Now, with the public preview release of the Jobs API, you can ingest large twin graphs into Azure Digital Twins with enriched logging and higher throughput. This in turn enables simulation scenarios, speeds up the setup of new instances, and lets customers automate their model and import workflows. It eliminates the need for multiple API requests to ingest a large twin graph, and with it the need to handle errors and retries across those requests.

What’s new with the Jobs API?

- Quickly populate an Azure Digital Twins instance: Import twins and relationships at a much faster rate than our existing APIs. Typically, the Jobs API allows import of:

  - 1M twins in about 10 minutes, and 1M relationships in about 15 minutes.

  - 12M entities consisting of 4M twins and 8M relationships in 90 to 120 minutes.

  - 12M entities consisting of 1M twins and 11M relationships in 135 to 180 minutes, where most twins have 10 relationships and 20 twins have 50k relationships.

Note: Today, the Jobs API scales out import performance based on the customer's usage pattern. The numbers shown above include the time this autoscaling takes.
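To put the import figures above in perspective, here is a small Python sketch that converts them into approximate entities per second. It only does arithmetic on the numbers quoted in this post; the helper names are ours, and real throughput will vary with the graph shape and the scale-out behavior described in the note.

```python
# Figures quoted in this post: (label, entity count, fastest minutes, slowest minutes).
QUOTED_RUNS = [
    ("1M twins", 1_000_000, 10, 10),
    ("1M relationships", 1_000_000, 15, 15),
    ("4M twins + 8M relationships", 12_000_000, 90, 120),
    ("1M twins + 11M relationships", 12_000_000, 135, 180),
]


def entities_per_second(entities: int, minutes: float) -> float:
    """Average ingestion rate for a run of the given duration."""
    return entities / (minutes * 60)


def throughput_range(entities: int, fastest_minutes: float, slowest_minutes: float):
    """Return (lowest, highest) entities per second for a quoted duration range."""
    return (
        entities_per_second(entities, slowest_minutes),
        entities_per_second(entities, fastest_minutes),
    )


for label, entities, fastest, slowest in QUOTED_RUNS:
    low, high = throughput_range(entities, fastest, slowest)
    print(f"{label}: roughly {low:,.0f} to {high:,.0f} entities/s")
```

Across the quoted runs this works out to somewhere between about 1,100 and 2,200 entities per second, which is a rough planning number rather than a guarantee.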

- Ingestion Limits: Import up to 2M twins and 10M relationships in one import job.

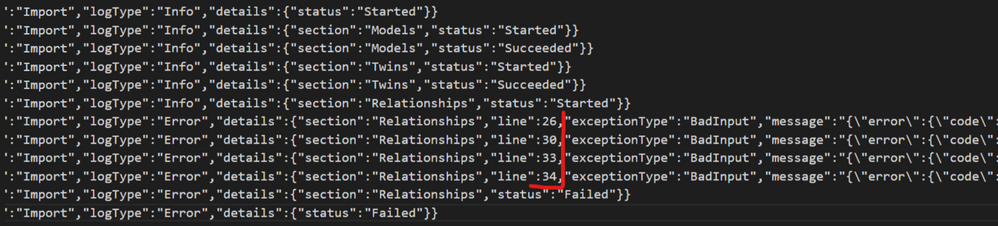

- Structured Output logs: The Jobs API produces structured and informative output logs indicating job state, progress, and more detailed error messages with line numbers.

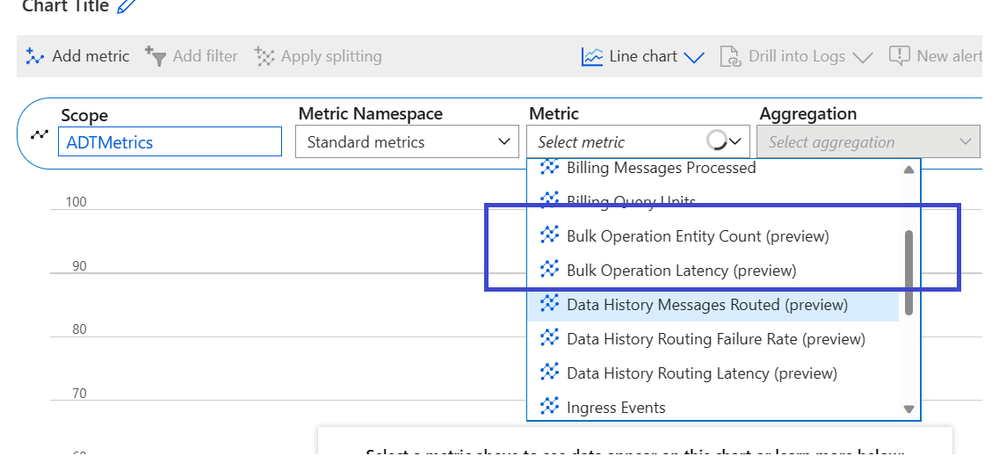

- Metrics: Additional metrics for your ADT instance indicating the number of entities ingested through import jobs are now available in the Azure portal.

- RBAC (role-based access control): The built-in role that provides all the permissions needed to use the Jobs API is Azure Digital Twins Data Owner. You can also use a custom role to grant granular access to only the data types that you need.

- Same billing model for public preview: The billing model for the Jobs API matches the existing billing for models/twins APIs. The import of entities is equivalent to create operations in Azure Digital Twins.
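As an illustration of the ingestion limits listed above, the following Python sketch checks a planned import against the 2M twin and 10M relationship limits before you build the file. The `GraphSize` type and `limit_violations` helper are hypothetical names for this example, not part of any Azure SDK, and the limits are simply the public preview values quoted in this post.

```python
from dataclasses import dataclass

# Per-job limits quoted in this post for the public preview.
MAX_TWINS_PER_JOB = 2_000_000
MAX_RELATIONSHIPS_PER_JOB = 10_000_000


@dataclass
class GraphSize:
    """Size of the twin graph you plan to import in a single job."""
    twins: int
    relationships: int


def limit_violations(size: GraphSize) -> list[str]:
    """Return a human-readable message for each per-job limit that is exceeded."""
    problems = []
    if size.twins > MAX_TWINS_PER_JOB:
        problems.append(
            f"{size.twins:,} twins exceeds the limit of {MAX_TWINS_PER_JOB:,}"
        )
    if size.relationships > MAX_RELATIONSHIPS_PER_JOB:
        problems.append(
            f"{size.relationships:,} relationships exceeds the limit of "
            f"{MAX_RELATIONSHIPS_PER_JOB:,}"
        )
    return problems


# Hypothetical plan: within the twin limit, over the relationship limit.
plan = GraphSize(twins=1_500_000, relationships=10_500_000)
for message in limit_violations(plan):
    print("Cannot import in one job:", message)
```

A check like this is cheap to run before uploading a large file, since a job that exceeds the limits would otherwise only fail after the upload.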

Import Job Workflow

Here are the steps to execute an import job.

- The user creates a data file in the ndjson format containing models, twins, and relationships. We have a code sample that you can use to convert existing models, twins, and relationships into the ndjson format. This code is written for .NET and can be downloaded or adapted to help you create your own import files.

- The user copies this data file to an Azure Blob Storage container.

- The user specifies permissions for the input storage container and output storage container.

- The user creates an import job, specifying the storage location of the file (input), as well as a storage location for error and log information (output). The user also provides the name of the output log file. The service will automatically create the output blob to store progress logs. There are two ways of scheduling and executing import of a twin graph using the Jobs API:

- Azure Digital Twins sends a new system event and changes the state of the job to Succeeded or Failed, based on how the job progressed.

- The user can review the output log information in the output folder for details on the job execution.
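The first step above, building the ndjson data file, can be sketched in a few lines of Python. This is not the .NET sample mentioned in the post. The section marker lines, the header fields, and the sample records below are assumptions made for illustration, so check the import file format in the Azure Digital Twins documentation before relying on this layout. The only thing the sketch guarantees is the ndjson shape itself: one compact JSON object per line.

```python
import json
import tempfile
from pathlib import Path


def write_import_file(path, header, models, twins, relationships):
    """Write an ndjson file, one compact JSON object per line, grouped by section.

    Returns the number of lines written.
    """
    # ASSUMPTION: each group is introduced by a {"Section": "<name>"} marker line.
    sections = [
        ("Header", [header]),
        ("Models", models),
        ("Twins", twins),
        ("Relationships", relationships),
    ]
    count = 0
    with open(path, "w", encoding="utf-8", newline="\n") as handle:
        for name, records in sections:
            for obj in [{"Section": name}, *records]:
                handle.write(json.dumps(obj, separators=(",", ":")) + "\n")
                count += 1
    return count


# Hypothetical placeholder data; IDs, model, and field layout are illustrative only.
header = {"fileVersion": "1.0.0", "author": "example", "organization": "example"}
models = [
    {"@id": "dtmi:example:Room;1", "@type": "Interface", "@context": "dtmi:dtdl:context;2"}
]
twins = [
    {"$dtId": "room-1", "$metadata": {"$model": "dtmi:example:Room;1"}},
    {"$dtId": "room-2", "$metadata": {"$model": "dtmi:example:Room;1"}},
]
relationships = [
    {"$dtId": "room-1", "$relationshipId": "r1", "$targetId": "room-2",
     "$relationshipName": "adjacentTo"},
]

with tempfile.TemporaryDirectory() as folder:
    target = Path(folder) / "graph.ndjson"
    lines_written = write_import_file(target, header, models, twins, relationships)
    # Read the file back to confirm every line is a standalone JSON document.
    parsed = [json.loads(line) for line in target.read_text(encoding="utf-8").splitlines()]

print(f"Wrote {lines_written} lines")
```

Writing each record with `json.dumps` on its own line keeps the file streamable, which matters when the graph has millions of entities. For graphs of that size you would pass generators instead of lists so the whole graph never sits in memory.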

Important points to note

Please keep the following points in mind while using the Jobs API.

- Import is not an atomic operation: there is no rollback if a partial import has been executed or the job has been canceled.

- You can cancel or delete the job itself, but you cannot update or delete twins using this API today.

- You can only run one job at a time per ADT instance.
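Because only one job can run at a time per instance, a script that submits several imports needs to wait for the current job to finish before starting the next. The sketch below shows one way to do that in Python. It deliberately takes a `get_status` callable instead of calling a real SDK, since the client call for reading job status is not covered in this post. "Succeeded" and "Failed" come from the workflow described earlier; the "Cancelled" spelling and the intermediate state names in the demo are assumptions.

```python
import time
from typing import Callable

# "succeeded" and "failed" are named in this post; "cancelled" is an assumed spelling.
TERMINAL_STATES = {"succeeded", "failed", "cancelled"}


def wait_for_job(
    get_status: Callable[[], str],
    poll_seconds: float = 30.0,
    timeout_seconds: float = 4 * 3600,
) -> str:
    """Poll get_status until the job reaches a terminal state and return that state.

    get_status is a caller-supplied function (hypothetical here) that returns the
    job's current state as a string. Raises TimeoutError if the deadline passes.
    """
    deadline = time.monotonic() + timeout_seconds
    while True:
        status = get_status().lower()
        if status in TERMINAL_STATES:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"job still in state {status!r} after {timeout_seconds}s")
        time.sleep(poll_seconds)


# Demo with a fake status source; the intermediate state names are hypothetical.
fake_states = iter(["NotStarted", "Running", "Running", "Succeeded"])
final_state = wait_for_job(lambda: next(fake_states), poll_seconds=0)
print("Job finished with state:", final_state)
```

Since import is not atomic, a "failed" or "cancelled" result may still have left some entities in the instance, so a caller should inspect the output log before retrying rather than resubmitting blindly.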

Learn more

- Read more about using the Jobs API for bulk import operations in "Azure Digital Twins APIs and SDKs" on Microsoft Learn.

- Watch the IoT Show to see the Jobs API in action.