This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

PgBadger is one of the most comprehensive Postgres troubleshooting tools available. It allows users to have insight into a wide variety of events happening in the database including:

- (Vacuums tab) Autovacuum actions - how many ANALYZE and VACUUM actions were triggered by autovacuum daemon including number of tuples and pages removed per table.

- (Temp Files tab) Distribution of temporary files and their sizes, queries that generated temporary files.

- (Locks tab) type of locks in the system, most frequent waiting queries, queries that waited the most; unfortunately, there is no information provided which query is holding the lock, only the queries that are locked are shown.

- (Top tab) Slow query log

- (Events tab) all the errors, fatals, warnings etc. aggregated.

- And many more

You can see a sample pgBadger report here.

You can generate a pgBadger report from Azure Database for PostgreSQL Flexible Server in multiple ways:

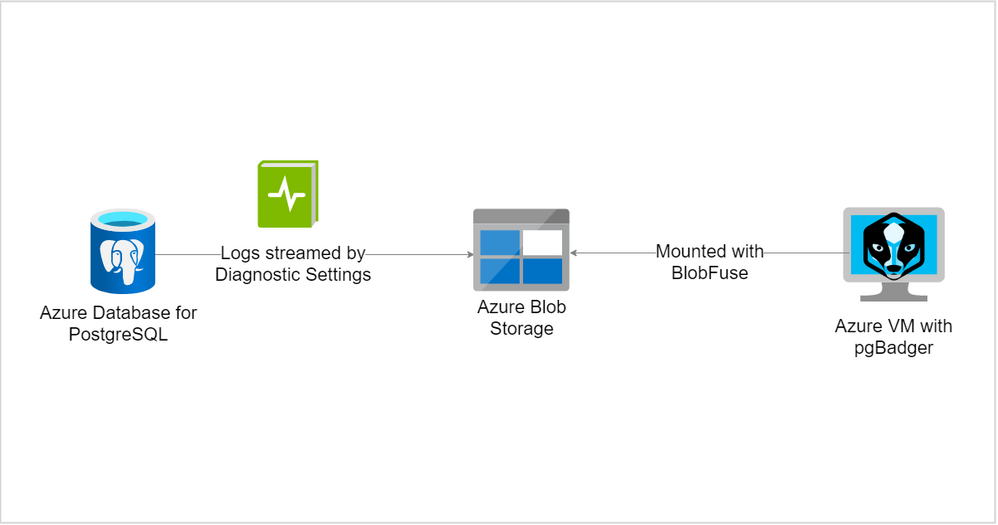

- Using Diagnostic Settings and redirecting logs to a storage account; mount storage account onto VM with BlobFuse.

- Using Diagnostic Settings and redirecting logs to a storage account; download the logs from storage account to the VM.

- Using Diagnostic Settings and redirecting logs to Log Analytics workspace.

- Using plain Server Logs*

*Coming soon!

In this article we will describe the first solution - Using Diagnostic Settings and redirecting logs to a storage account. At the end of exercise we will have storage account filled with the logs from Postgres Flexible Server and a operational VM with direct access to the logs stored in the storage account like shown below in the picture:

To be able to generate the report you need to configure the following items:

- Adjust Postgres Server configuration

- Create storage account (or use existing one)

- Create Linux VM (or use existing one)

- Configure Diagnostic Settings in Postgres Flexible Server and redirect logs to the storage account.

- Mount storage account onto VM using BlobFuse

- Install pgBadger on the VM

- Ready to generate reports!

Step 1 Adjust Postgres Server configuration

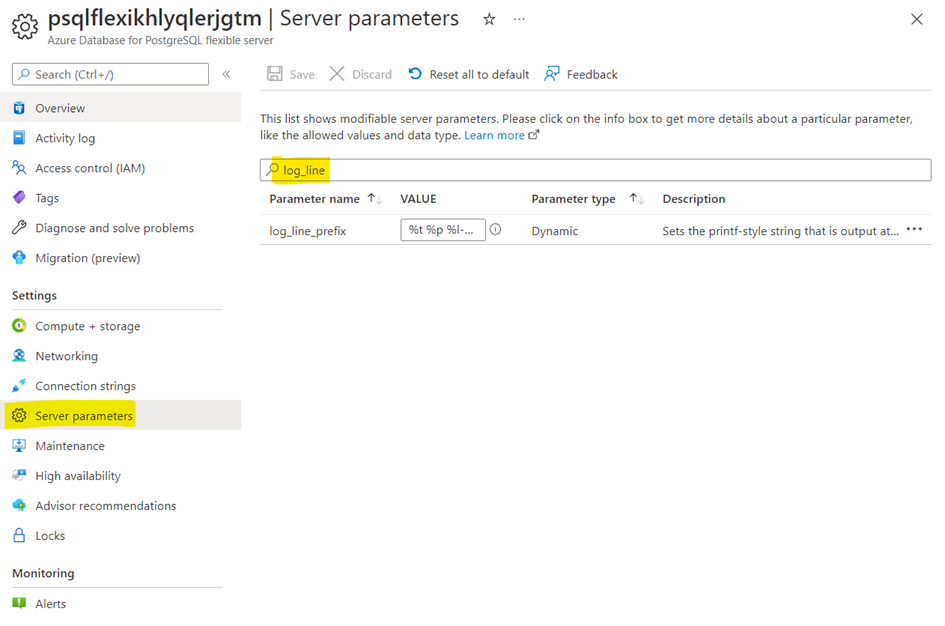

Navigate to the Server Parameters blade in the portal and modify the following parameters:

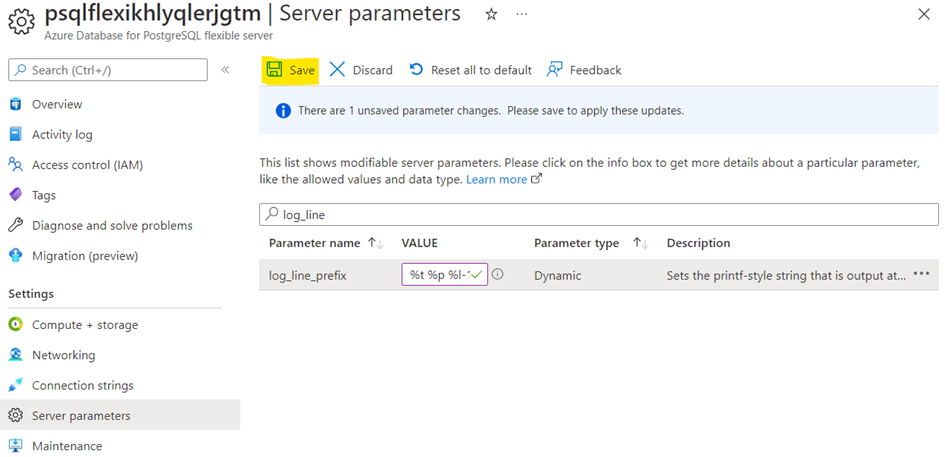

log_line_prefix = '%t %p %l-1 db-%d,user-%u,app-%a,client-%h ' #Please mind the space at the end!

log_lock_waits = on

log_temp_files = 0

log_autovacuum_min_duration = 0

log_min_duration_statement=0 # 0 is recommended only for test purposes, for production usage please consider much higher value, like 60000 (1 minute) to avoid excessive usage of resources

After the change hit the “Save”:

Step 2 Create storage account (or use existing one)

Please keep in mind that the storage account needs to be created in the same region as Azure Database for PostgreSQL Flexible Server. Please find the instruction here.

Step 3 Create Linux VM (or use existing one)

In this blog post we will use Ubuntu 20.04 as an example, but nothing stops you from using rpm-based system, the only difference will be in a way that BlobFuse and pgBadger is installed.

Step 4 Configure Diagnostic Settings in Postgres Flexible Server and redirect logs to the storage account.

Navigate to Diagnostic settings page in the Azure Portal, Azure Database for PostgreSQL Flexible Server instance and add a new diagnostic setting with storage account as a destination:

Hit save button.

Step 5 Mount storage account onto VM using BlobFuse

In this section you will mount storage account to your VM using BlobFuse. This way you will see the logs on the storage account as standard files in your VM. First let’s download and install necessary packages. Commands for Ubuntu 20.04 are as follows (feel free to simply copy and paste the following commands):

wget https://packages.microsoft.com/config/ubuntu/20.04/packages-microsoft-prod.deb

sudo dpkg -i packages-microsoft-prod.deb

sudo apt-get update -y

For other distributions please follow the official documentation.

Use a ramdisk for the temporary path (Optional Step)

The following example creates a ramdisk of 16 GB and a directory for BlobFuse. Choose the size based on your needs. This ramdisk allows BlobFuse to open files up to 16 GB in size.

sudo mkdir /mnt/ramdisk

sudo mount -t tmpfs -o size=16g tmpfs /mnt/ramdisk

sudo mkdir /mnt/ramdisk/blobfusetmp

sudo chown <your VM admin> /mnt/ramdisk/blobfusetmpAuthorize access to your storage account

You can authorize access to your storage account by using the account access key, a shared access signature, a managed identity, or a service principal. Authorization information can be provided on the command line, in a config file, or in environment variables. For details, see Valid authentication setups in the BlobFuse readme.

For example, suppose you are authorizing with the account access keys and storing them in a config file. The config file should have the following format:

accountName myaccount

accountKey storageaccesskey

containerName insights-logs-postgresqllogs

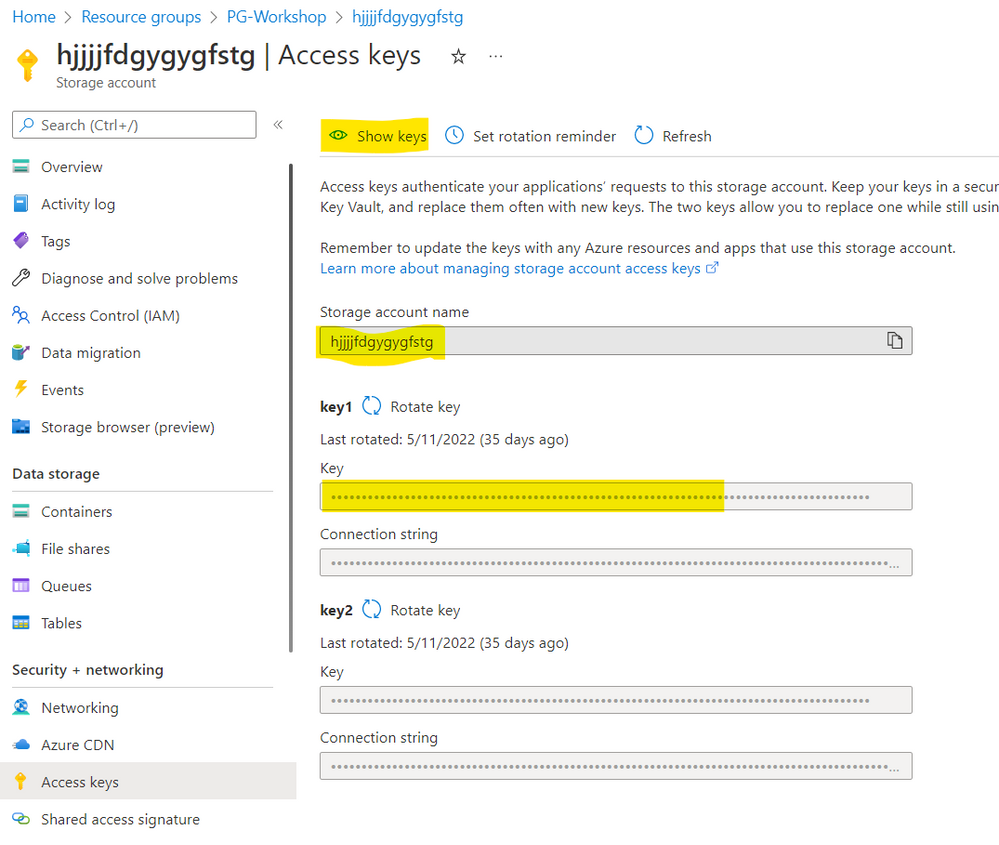

Please prepare the following file in editor of your choice. Values for the accountName and accountKey you will find in the Azure Portal and the container name is the same as in the example above. The accountName is the name of your storage account, and not the full URL.

Please navigate to your storage account in the portal and then choose Access keys page:

Copy accountName and accountKey and paste it to the file. Copy the content of your file and paste it to the fuse_connection.cfg file in your home directory, then mount your storage account container onto the directory in your VM:

vi fuse_connection.cfg

chmod 600 fuse_connection.cfg

mkdir ~/mycontainer

sudo blobfuse ~/mycontainer --tmp-path=/mnt/resource/blobfusetmp --config-file=/home/<your VM admin>/fuse_connection.cfg -o attr_timeout=240 -o entry_timeout=240 -o negative_timeout=120

sudo -i

cd /home/<your VM admin>/mycontainer/

ls # check if you see container mounted

# Please use tab key for directory autocompletion; do not copy and paste!

cd resourceId\=/SUBSCRIPTIONS/<your subscription id>/RESOURCEGROUPS/PG-WORKSHOP/PROVIDERS/MICROSOFT.DBFORPOSTGRESQL/FLEXIBLESERVERS/PSQLFLEXIKHLYQLERJGTM/y\=2022/m\=06/d\=16/h\=09/m\=00/

head PT1H.json # to check if file is not emptyAt this point you should be able to see some logs being generated.

Step 6 Install pgBadger on the VM

Now we need to install pgBadger tool on the VM. For Ubuntu please simply use the command below:

sudo apt-get install -y pgbadgerFor other distributions please follow the official documentation.

Step 7 Ready to generate reports!

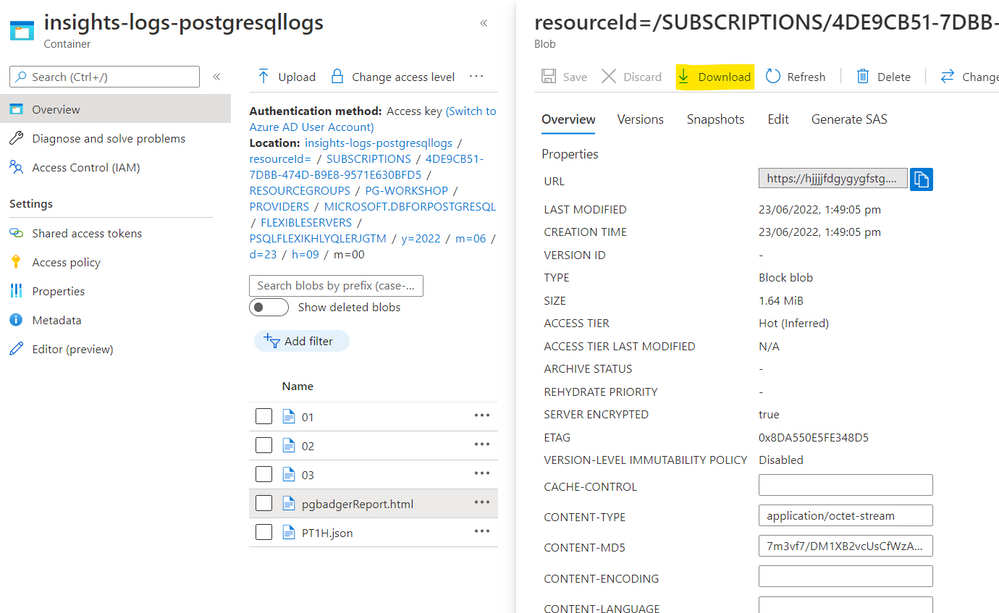

Choose the file you want to generate pgBadger from and go to the directory where the chosen PT1H.json file is stored, for instance, to generate a report from 2022-05-23, 9 o'clock you need to go to the following directory:

cd /home/pgadmin/mycontainer/resourceId=/SUBSCRIPTIONS/***/RESOURCEGROUPS/PG-WORKSHOP/PROVIDERS/MICROSOFT.DBFORPOSTGRESQL/FLEXIBLESERVERS/PSQLFLEXIKHLYQLERJGTM/y=2022/m=05/d=23/h=09/m=00

Since PT1H.json file is a json file and the Postgres log lines are stored in the message and statement values of the json we need to extract the logs first. The most convenient tool for the job is jq which you can install using the following command on Ubuntu:

sudo apt-get install jq -y

Once jq is installed we need to extract Postgres log from json file and save it in another file (PTH1.log in this example):

jq -r '.properties | .message + .statement' PT1H.json > PT1H.log

Finally we are ready to generate pgBadger report:

pgbadger --prefix='%t %p %l-1 db-%d,user-%u,app-%a,client-%h ' PT1H.log -o pgbadgerReport.html

Now you can download your report either from Azure Portal - your storage account or by using scp command:

Happy Troubleshooting!