This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs.

Abstract:

This blog explores what tokens are and why they matter in GPT models. It walks through the tokenization process and highlights techniques for keeping text input efficient. It also covers the challenges of token usage and shows how optimizing token utilization can reduce processing time and cost. Through examples and practical considerations, readers will gain a solid understanding of how to make the most of tokens in GPT models. Finally, it introduces a newly developed tool, “Token Metric & Code Optimizer”, for token optimization, along with example code.

Introduction:

Tokens are the fundamental units of text that GPT models use to process and generate language. They can represent individual characters, words, or subwords depending on the specific tokenization approach. By breaking down text into tokens, GPT models can effectively analyze and generate coherent and contextually appropriate responses.

GPT Model & Token Limit:

Character-Level Tokens:

In character-level tokenization, each individual character becomes a token. For example, the sentence "Hello, world!" would be tokenized into the following tokens: ['H', 'e', 'l', 'l', 'o', ',', ' ', 'w', 'o', 'r', 'l', 'd', '!']. Here, each character is treated as a separate token, resulting in a token sequence of length 13.
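In Python, character-level tokenization amounts to turning the string into a list of its characters. A minimal sketch that reproduces the example above:

```python
def char_tokenize(text: str) -> list[str]:
    """Character-level tokenization: every character, including spaces and punctuation, is a token."""
    return list(text)

tokens = char_tokenize("Hello, world!")
print(tokens)       # ['H', 'e', 'l', 'l', 'o', ',', ' ', 'w', 'o', 'r', 'l', 'd', '!']
print(len(tokens))  # 13
```

The vocabulary stays tiny (just the set of possible characters), but even short sentences turn into long token sequences.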

Word-Level Tokens:

In word-level tokenization, each word in the text becomes a token. For instance, the sentence "I love to eat pizza" would be tokenized into the following tokens: ['I', 'love', 'to', 'eat', 'pizza']. In this case, each word is considered a separate token, resulting in a token sequence of length 5.
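A naive word-level tokenizer simply splits on whitespace, which leaves punctuation attached to words; a regular expression can separate the two. Both functions below are illustrative sketches of the idea, not how GPT models actually tokenize:

```python
import re

def word_tokenize(text: str) -> list[str]:
    """Naive word-level tokenization: split on runs of whitespace."""
    return text.split()

def word_punct_tokenize(text: str) -> list[str]:
    """Word-level tokenization that also splits punctuation into separate tokens."""
    return re.findall(r"\w+|[^\w\s]", text)

print(word_tokenize("I love to eat pizza"))   # ['I', 'love', 'to', 'eat', 'pizza']
print(word_tokenize("Hello, world!"))         # ['Hello,', 'world!']
print(word_punct_tokenize("Hello, world!"))   # ['Hello', ',', 'world', '!']
```

Sequences are short, but every distinct word (and every word-plus-punctuation variant, in the naive version) needs its own vocabulary entry, and any word not seen during training has no token at all.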

Tokenization Techniques:

Character-level tokens keep the vocabulary tiny but produce very long sequences, while word-level tokens keep sequences short but require an enormous vocabulary and still cannot represent unseen words. To balance this trade-off between granularity and vocabulary size, GPT models use subword tokenization, which breaks words into smaller subword units. Algorithms such as Byte Pair Encoding (BPE), and toolkits such as SentencePiece (which implements BPE and unigram language-model tokenization), are commonly used for this. Because any word can be assembled from subword units, this approach lets GPT models handle out-of-vocabulary (OOV) words while keeping the overall vocabulary size manageable, so the model can efficiently handle a much larger variety of words.
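To make subword tokenization concrete, here is a toy sketch of how BPE learns its merges: start from single characters, repeatedly merge the most frequent adjacent pair, then replay the learned merges, in order, on new words. It is a simplified illustration, not the production algorithm: GPT tokenizers use byte-level BPE trained on a very large corpus (OpenAI publishes its encodings through the tiktoken library). The training words and merge count are invented for the example.

```python
from collections import Counter

def merge_symbols(symbols, pair):
    """Return a new tuple in which every adjacent occurrence of `pair` is merged into one symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)

def learn_bpe(words, num_merges):
    """Learn up to `num_merges` merge rules from a list of training words."""
    # Every word starts as a tuple of single characters, weighted by how often it occurs.
    corpus = Counter(tuple(word) for word in words)
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs across the whole corpus.
        pairs = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:
            break  # every word is already a single symbol
        # Most frequent pair wins; ties are broken alphabetically for reproducibility.
        best = min(pairs, key=lambda p: (-pairs[p], p))
        merges.append(best)
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            new_corpus[merge_symbols(symbols, best)] += freq
        corpus = new_corpus
    return merges

def bpe_tokenize(word, merges):
    """Split a word (seen or unseen) into subwords by replaying the merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_symbols(symbols, pair)
    return list(symbols)

# Toy training data, invented for this example.
training_words = ["low"] * 5 + ["lower"] * 2 + ["newest"] * 6 + ["widest"] * 3
merges = learn_bpe(training_words, num_merges=4)
print(merges)                           # [('e', 's'), ('es', 't'), ('l', 'o'), ('lo', 'w')]
print(bpe_tokenize("lowest", merges))   # ['low', 'est']  ("lowest" never appears in training)
print(bpe_tokenize("slowest", merges))  # ['s', 'low', 'est']
```

Neither "lowest" nor "slowest" was in the training data, yet both are covered by known subword units, which is exactly how subword tokenization avoids the OOV problem.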

Cost of Tokens:

While tokens are essential for text processing, they come with a cost. Every token the model processes consumes memory and compute, so longer inputs take more time and resources to handle, and hosted services such as Azure OpenAI typically bill by the number of input (prompt) and output (completion) tokens. Pre-training GPT models also involves significant computation and storage. Optimizing token usage is therefore essential to minimize costs and improve overall efficiency.
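Because billing is per token, the cost of a request grows linearly with the number of prompt and completion tokens. A minimal sketch of the arithmetic; the prices below are made up for illustration (real prices vary by model, region, and provider, and some providers quote them per 1M tokens rather than per 1K):

```python
def estimate_cost(prompt_tokens: int, completion_tokens: int,
                  prompt_price_per_1k: float, completion_price_per_1k: float) -> float:
    """Estimate the charge for one request when prices are quoted per 1,000 tokens."""
    return ((prompt_tokens / 1000) * prompt_price_per_1k
            + (completion_tokens / 1000) * completion_price_per_1k)

# Hypothetical prices, for illustration only; check your provider's pricing page.
cost = estimate_cost(1500, 500, prompt_price_per_1k=0.0015, completion_price_per_1k=0.002)
print(f"${cost:.5f} per request")                   # $0.00325 per request
print(f"${cost * 10_000:.2f} for 10,000 requests")  # $32.50 for 10,000 requests
```

Every token trimmed from a prompt that is sent thousands of times is saved thousands of times, which is why small per-request reductions add up.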

Optimizing Token Usage:

Optimizing token usage plays a vital role in reducing cost. One aspect of optimization is keeping the number of tokens in each request as small as possible and within the model's limit. Techniques such as truncation and intelligent splitting help achieve this. Truncation discards any text beyond the model's maximum token limit, sacrificing the context contained in that text. Splitting, on the other hand, divides long texts into smaller segments that each fit within the token limit, so the full text can be processed in parts while maintaining context and coherence.
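Both strategies can be sketched on a plain list of token IDs. The `overlap` parameter is an assumption added for illustration (the post does not prescribe it): repeating a few tokens at each chunk boundary is a common way to carry context from one chunk into the next.

```python
def truncate(tokens: list, max_tokens: int) -> list:
    """Keep only the first `max_tokens` tokens; everything beyond the limit is lost."""
    return tokens[:max_tokens]

def split_into_chunks(tokens: list, max_tokens: int, overlap: int = 0) -> list[list]:
    """Split tokens into windows of at most `max_tokens`, each sharing `overlap` tokens with the previous one."""
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be >= 0 and smaller than max_tokens")
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + max_tokens])
        if start + max_tokens >= len(tokens):
            break  # this window already reaches the end of the input
    return chunks

tokens = list(range(10))  # stand-in for the token IDs of a long document
print(truncate(tokens, 4))                      # [0, 1, 2, 3]
print(split_into_chunks(tokens, 4, overlap=1))  # [[0, 1, 2, 3], [3, 4, 5, 6], [6, 7, 8, 9]]
```

Truncation keeps 4 of the 10 tokens; splitting keeps all 10, at the price of a few duplicated boundary tokens.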

For example, suppose we have a long document that exceeds the token limit. Instead of truncating it and losing everything past the limit, we can split it into smaller chunks, process each chunk separately, and then combine the outputs. Intelligent splitting lets us process the whole document efficiently while preserving its context.
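The split-process-combine pattern can be sketched as follows. Packing whole paragraphs into each chunk, rather than cutting at an arbitrary token position, is one way to make the split "intelligent". Both `count_tokens` and `process_chunk` are hypothetical parameters: in practice the first would be a real tokenizer count and the second a model call (for example, a summarization request); here a word count and a stub stand in for them.

```python
from typing import Callable

def pack_paragraphs(text: str, max_tokens: int,
                    count_tokens: Callable[[str], int]) -> list[str]:
    """Group whole paragraphs into chunks that each stay within max_tokens.

    A single paragraph larger than max_tokens still becomes its own (oversized)
    chunk; it would need a finer-grained split before being sent to a model.
    """
    chunks, current = [], []
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        candidate = "\n\n".join(current + [para])
        if current and count_tokens(candidate) > max_tokens:
            chunks.append("\n\n".join(current))  # close the chunk before it overflows
            current = [para]
        else:
            current.append(para)
    if current:
        chunks.append("\n\n".join(current))
    return chunks

def process_long_document(text: str, max_tokens: int,
                          count_tokens: Callable[[str], int],
                          process_chunk: Callable[[str], str]) -> str:
    """Process each chunk independently, then join the per-chunk outputs."""
    outputs = [process_chunk(chunk) for chunk in pack_paragraphs(text, max_tokens, count_tokens)]
    return "\n".join(outputs)

def word_count(s: str) -> int:
    """Hypothetical stand-in for a real token counter."""
    return len(s.split())

def fake_summarize(chunk: str) -> str:
    """Hypothetical placeholder for a model call."""
    return f"[summary of {word_count(chunk)} words]"

doc = ("First paragraph about tokens.\n\n"
       "Second paragraph about cost.\n\n"
       "Third paragraph about limits.")
print(process_long_document(doc, 8, word_count, fake_summarize))
# [summary of 8 words]
# [summary of 4 words]
```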

Now let’s look at how to optimize text so that it uses tokens more efficiently, reducing both cost and processing time. Next, we will discuss a custom tool built for this purpose, Token Metric & Code Optimizer:

Token Metric & Code Optimizer:

Token Optimizer:

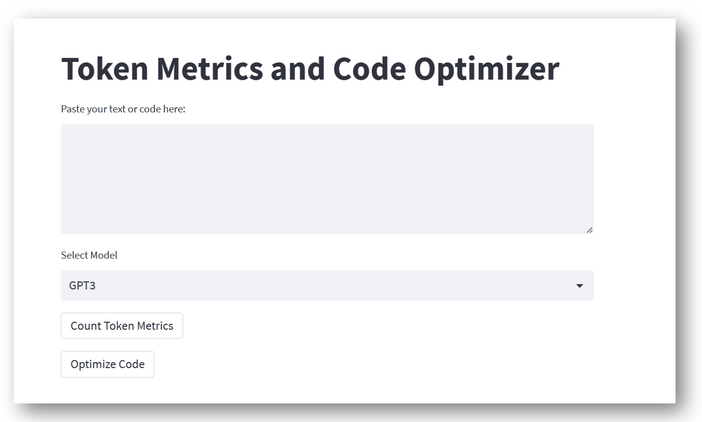

The Token Optimizer tool I developed lets users check how many tokens a given text input requires. It reports the total token count, character count, and word count, giving users insight into the composition of their text and its token requirements.
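The post does not show how the tool computes these metrics, so the following is only a sketch of the same three counts. The token figure is an assumption: a rough rule of thumb of about four characters per token for English text, not a real tokenizer. With OpenAI's tiktoken package installed, `len(tiktoken.get_encoding("cl100k_base").encode(text))` would give an exact count for models that use that encoding.

```python
import math

def text_metrics(text: str) -> dict:
    """Return token, character, and word counts for a piece of text."""
    return {
        # Rough estimate only (~4 characters per token for English text);
        # an exact count requires the model's own tokenizer.
        "tokens": math.ceil(len(text) / 4),
        "characters": len(text),
        "words": len(text.split()),
    }

print(text_metrics("I love to eat pizza"))
# {'tokens': 5, 'characters': 19, 'words': 5}
```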

Additionally, the tool's Text Optimizer module removes extra spaces and otherwise streamlines the text, reducing unnecessary token usage. Once optimization is complete, users can download the optimized file for further processing.
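A minimal sketch of whitespace cleanup, assuming the optimizer's main job is to collapse repeated spaces and blank lines (the post does not spell out its exact rules, and removal of special characters is left out here):

```python
import re

def optimize_text(text: str) -> str:
    """Collapse redundant whitespace while keeping paragraph breaks."""
    text = re.sub(r"[ \t]+", " ", text)     # runs of spaces/tabs -> a single space
    text = re.sub(r" *\n *", "\n", text)    # trim spaces around line breaks
    text = re.sub(r"\n{3,}", "\n\n", text)  # allow at most one blank line in a row
    return text.strip()

raw = "Tokens   are\t\tthe   basic units.  \n\n\n\n  GPT   models  count them.   "
clean = optimize_text(raw)
print(repr(clean))  # 'Tokens are the basic units.\n\nGPT models count them.'
print(len(raw), "->", len(clean), "characters")
```

In a Streamlit app, the cleaned string could then be offered for download with `st.download_button`, passing the text as `data` and a `file_name`.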

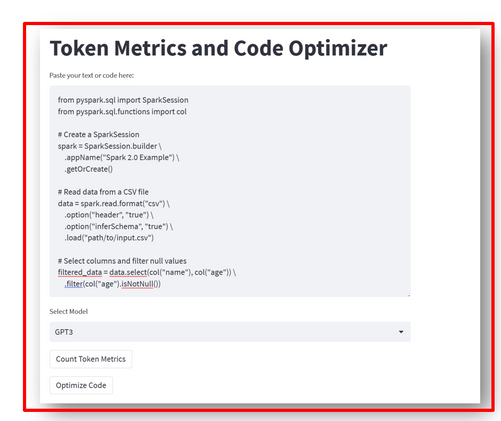

Code Optimizer:

Similarly, the Code Optimizer module reports the token count, character count, and word count of the input code and then optimizes it. By optimizing the code, users can improve both its readability and its token efficiency. The tool also lets users download the optimized code file for seamless integration into their development workflow.
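The installation steps list Black, which suggests the tool reformats code with it; Black's `black.format_str(source, mode=black.Mode())` standardizes formatting but keeps comments. As a dependency-free alternative (a swapped-in technique, not the tool's own method), Python's standard-library `ast` module (Python 3.9+) can re-emit code from its syntax tree, which normalizes spacing and drops comments:

```python
import ast

def compact_python(source: str) -> str:
    """Re-emit Python source from its syntax tree.

    Parsing and unparsing normalizes spacing and blank lines and removes
    comments (they are not part of the AST), which usually lowers the
    token count. Docstrings are kept because they are real string nodes.
    """
    return ast.unparse(ast.parse(source))

messy = "x  =  1   # set x\n\n\n\ndef add_one( a ):\n\n    return a+1\n"
compact = compact_python(messy)
print(compact)
print(len(messy), "->", len(compact), "characters")
```

The trade-off: comments that explain intent are lost, so this suits code sent to a model for analysis rather than code you intend to keep maintaining.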

Overall, the Token Optimizer provides a comprehensive solution for optimizing both text and code inputs. It empowers users to understand the token requirements, eliminate unnecessary tokens, and obtain optimized files for further processing.

Non-optimized text (contains extra spaces and special characters):

Counting tokens and downloading the optimized text:

Optimized text download:

Code Optimizer:

Code metrics and optimized code download:

Installation Guide:

- Install Python

- Install Streamlit: pip install streamlit

- Install Black: pip install black

- To keep the OpenAI access key out of your code, follow my previous blog post:

How to Secure Azure OpenAI Keys Using Environment Variables, Azure Vault, and Streamlit Secrets
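As a minimal sketch of the environment-variable approach, the key can be read at runtime instead of being hard-coded. The variable name `AZURE_OPENAI_API_KEY` is a hypothetical choice for this example; in a Streamlit app, the same value could instead come from `st.secrets`.

```python
import os

def get_openai_key(var_name: str = "AZURE_OPENAI_API_KEY") -> str:
    """Read the API key from an environment variable (hypothetical name) instead of source code."""
    key = os.environ.get(var_name)
    if not key:
        # Fail loudly rather than sending requests with an empty key.
        raise RuntimeError(f"Environment variable {var_name} is not set.")
    return key
```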

Code In Git:

Token Optimization Tool

Conclusion:

Efficient tokenization and optimization play a crucial role in reducing the costs of using GPT models. By understanding how subword tokenization counts their text, removing unnecessary tokens such as redundant whitespace, and using truncation and intelligent splitting to stay within token limits, organizations can reduce the number of tokens they process, leading to meaningful savings in computational resources, processing time, and cost. As organizations apply GPT models to a growing range of text processing tasks, understanding and implementing token optimization strategies becomes essential for cost-effective and efficient operations.