This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

Imagine that you, as a SaaS provider, have to reliably upload a massive amount of content into the cloud (for simplicity, this article treats the content as JSON files). The SaaS application has to upload millions of files from hundreds of thousands of users within a few minutes.

The scenario is mission-critical because the user-generated content is very important and must be uploaded reliably; failure to upload is not an option.

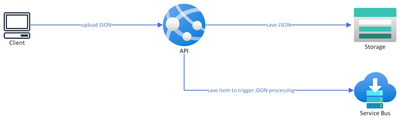

A typical architecture has a load-balanced API that receives and processes each file, uploads it into Azure Blob Storage, and perhaps sends a notification about the newly uploaded document to a queue for further processing.

However, there is an alternative approach that can improve resiliency and throughput, and save costs.

This article will cover the list of steps needed to achieve this alternative approach:

- Step 1 (resiliency): multiple storage accounts

- Step 2 (resiliency): upload directly into storage accounts without processing JSONs on API

- Step 3: introduce Azure Front Door

- Conclusion

Step 1 towards resiliency: multiple storage accounts

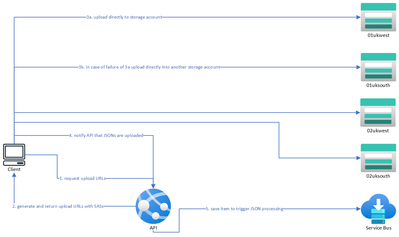

Rather than relying on a single storage account, consider creating multiple storage accounts distributed across two or more Azure regions. In this example, we have a total of four storage accounts: two in the UK West region (01ukwest and 02ukwest) and two in the UK South region (01uksouth and 02uksouth). The storage accounts are named after their regions for simplicity.

With this architecture, the API receives each JSON file and picks the storage account in which to store it.

Step 2 towards resiliency: upload directly into storage accounts without processing JSONs on API

The next step in improving resilience is to stop processing the JSON files at the API level and write them directly into the storage account instead. With this approach, the client application itself uploads files directly into Azure Storage.

In this new approach, the client app calls a web-based API and retrieves a list of upload locations. For each JSON file that the client uploads, the backend system generates a list of possible upload locations, one in each of the existing storage accounts (four in this example). Each URL contains a Shared Access Signature (SAS), ensuring that the URL can only be used to upload to the designated blob URL.
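
As a rough illustration of the backend side, the sketch below builds one candidate upload URL per storage account. The function name `build_upload_urls`, the `sign` callback and the placeholder `fake_sign` are assumptions made for this example, not part of the original solution; in a real backend, `sign` would wrap the SAS generation offered by the Azure SDK (for example `generate_blob_sas` in the `azure-storage-blob` package) with write-only permission and a short expiry.

```python
from typing import Callable, List
from urllib.parse import quote

# Account and container names follow the example in this article.
ACCOUNTS = ["01ukwest", "02ukwest", "01uksouth", "02uksouth"]
CONTAINER = "testuploadcontainer"


def build_upload_urls(
    blob_name: str,
    sign: Callable[[str, str, str], str],
    accounts: List[str] = ACCOUNTS,
    container: str = CONTAINER,
) -> List[str]:
    """Return one candidate upload URL per storage account.

    `sign(account, container, blob_name)` is a hypothetical callback that
    returns a SAS query string (without the leading '?') scoped to one blob.
    """
    urls = []
    for account in accounts:
        sas = sign(account, container, blob_name)
        path = f"{quote(container)}/{quote(blob_name)}"
        urls.append(f"https://{account}.blob.core.windows.net/{path}?{sas}")
    return urls


def fake_sign(account: str, container: str, blob_name: str) -> str:
    # Placeholder only: NOT a valid signature. Swap in real SAS generation.
    return "sig=PLACEHOLDER"


if __name__ == "__main__":
    for url in build_upload_urls("user1/doc.json", fake_sign):
        print(url)
```

Keeping the signing step behind a callback means the URL-building logic can be tested without storage account keys.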

The client attempts to upload the JSON file via one of the provided URLs. If that request fails, the client app tries the next alternative location.
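
The client-side fallback could look roughly like the following sketch. The names `put_blob` and `upload_with_fallback` are made up for this example. The upload is a plain HTTP PUT with the `x-ms-blob-type: BlockBlob` header that the Blob Storage Put Blob operation requires; retry policy, back-off and logging are deliberately left out.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable, Optional


def put_blob(url: str, data: bytes, timeout: float = 10.0) -> bool:
    """PUT `data` to a SAS URL as a block blob; return True on success."""
    request = urllib.request.Request(
        url,
        data=data,
        method="PUT",
        headers={
            "x-ms-blob-type": "BlockBlob",  # required by the Put Blob operation
            "Content-Type": "application/json",
        },
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 201  # Put Blob answers 201 Created
    except (urllib.error.URLError, OSError):
        # Covers HTTP error statuses, DNS failures, timeouts and resets.
        return False


def upload_with_fallback(
    urls: Iterable[str],
    data: bytes,
    put: Callable[[str, bytes], bool] = put_blob,
) -> Optional[str]:
    """Try each URL in order; return the URL that accepted the upload, or None."""
    for url in urls:
        if put(url, data):
            return url
    return None
```

A caller would pass the URL list received from the API and treat a `None` result as "all locations failed", for example by keeping the file locally and retrying later.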

This approach improves performance and helps to achieve load distribution/sharding by having different clients use the storage accounts in different orders. It is the API's responsibility to make sure that files are distributed evenly between storage accounts, so you as a SaaS provider have to define the logic that decides which storage accounts client applications should try first.

By having the client app upload JSON files directly into blob storage, we make sure that the compute resource (the API layer that previously handled JSON uploads from the client) is not a performance bottleneck. We also bring down the cost of the overall solution, since the API no longer spends compute time on uploading files.

However, this approach presents a new challenge: customers need to whitelist the URLs of all the storage accounts, and there is no dynamic way to add the URLs of new storage accounts to that whitelist.
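
One simple way to give different clients different orders is to rotate the account list by an offset derived from a client identifier, as in the sketch below. The function name `ordered_accounts` and the use of a client ID are assumptions for this example; a production API would probably also weigh account health and current load.

```python
import hashlib
from typing import List


def ordered_accounts(client_id: str, accounts: List[str]) -> List[str]:
    """Rotate the account list by a stable offset derived from the client ID.

    The same client always gets the same order, while different clients are
    spread across different starting accounts.
    """
    digest = hashlib.sha256(client_id.encode("utf-8")).digest()
    offset = int.from_bytes(digest[:8], "big") % len(accounts)
    return accounts[offset:] + accounts[:offset]


if __name__ == "__main__":
    accounts = ["01ukwest", "02ukwest", "01uksouth", "02uksouth"]
    for client in ("client-a", "client-b", "client-c"):
        print(client, ordered_accounts(client, accounts))
```

Every client still receives all accounts, so the fallback behaviour is unchanged; only the first choice differs.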

Step 3: introduce Azure Front Door

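This problem can be solved with Azure Front Door routing for Azure Blob Storage: customers whitelist a single Front Door hostname, and storage accounts can be added behind it without any change on the customer side.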

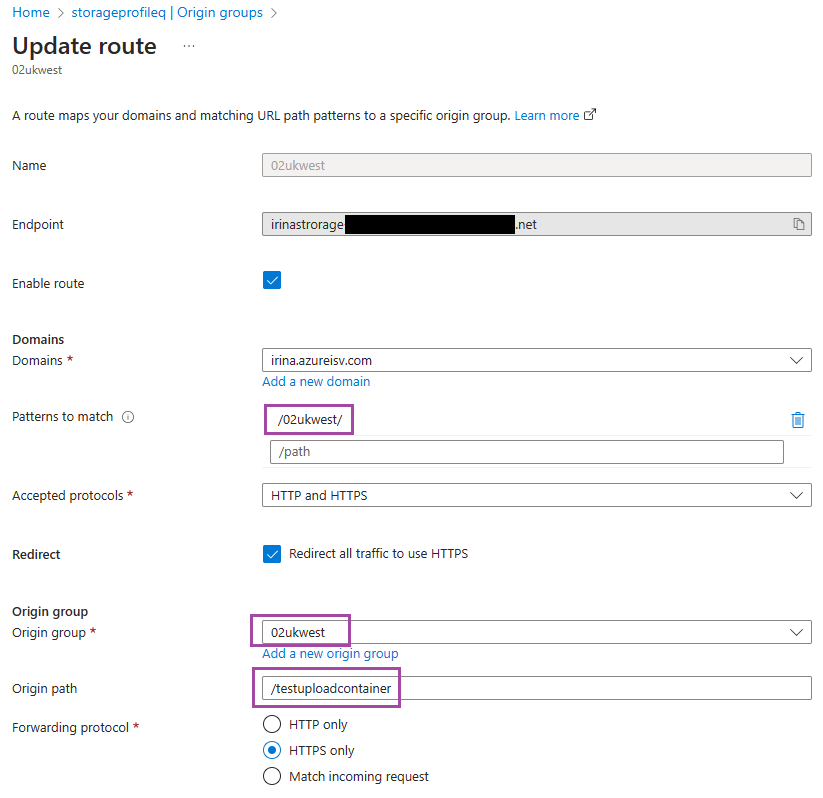

With this solution, a request such as "https://irina.azureisv.com/01ukwest/<blob_to_be_uploaded>" is directed to the corresponding underlying storage account, i.e., "https://01ukwest.blob.core.windows.net/testuploadcontainer/<blob_to_be_uploaded>". Note that the container name remains unknown to the client application; it is held only in the Azure Front Door configuration.

Azure Front Door configuration for Azure Storage accounts
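
To make the mapping concrete, here is a small model of it in Python. This only illustrates the URL translation described above and is not how Front Door is implemented or configured; the `ROUTES` table and `resolve_origin_url` are hypothetical names, and the query string (which would carry the SAS) is assumed to be forwarded unchanged.

```python
from typing import Dict, Tuple
from urllib.parse import urlsplit

# Hypothetical routing table: first path segment -> (storage account, container).
ROUTES: Dict[str, Tuple[str, str]] = {
    "01ukwest": ("01ukwest", "testuploadcontainer"),
    "02ukwest": ("02ukwest", "testuploadcontainer"),
    "01uksouth": ("01uksouth", "testuploadcontainer"),
    "02uksouth": ("02uksouth", "testuploadcontainer"),
}


def resolve_origin_url(public_url: str, routes: Dict[str, Tuple[str, str]] = ROUTES) -> str:
    """Translate a public upload URL into the storage URL it is routed to."""
    parts = urlsplit(public_url)
    # Split "/01ukwest/some/blob.json" into the route prefix and the blob path.
    prefix, _, remainder = parts.path.lstrip("/").partition("/")
    if prefix not in routes or not remainder:
        raise ValueError(f"no route for path {parts.path!r}")
    account, container = routes[prefix]
    url = f"https://{account}.blob.core.windows.net/{container}/{remainder}"
    # Assumption: the query string is passed through to the origin as-is.
    return f"{url}?{parts.query}" if parts.query else url


if __name__ == "__main__":
    print(resolve_origin_url("https://irina.azureisv.com/01ukwest/user1/doc.json"))
```

Because the client only ever sees the first form of the URL, accounts and containers can be reorganised behind the routing table without changing client code.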

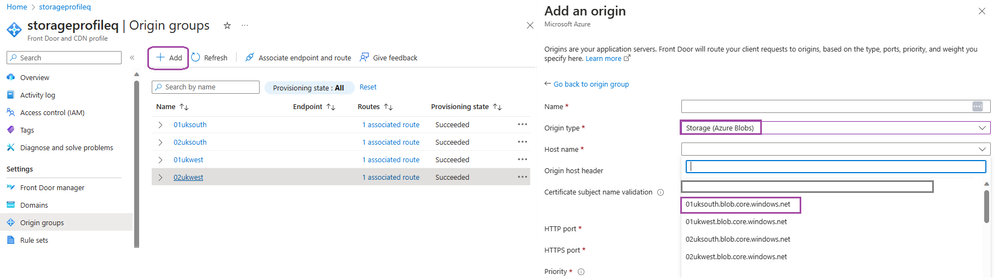

Below is an example configuration for Azure Front Door. First, create a new Origin and Origin group:

In the origin configuration, make sure to specify the origin type as a blob storage account and select the appropriate storage account available within your subscription.

When configuring the route for the Origin group, specify the path that this origin group will handle (in our case, everything under /02ukwest), select the newly created origin group, and specify the path to the container inside the storage account.

Finally, you need to create a new Rule set configuration:

*Preserve unmatched path allows you to append the remaining path after the source pattern to the new path.

Conclusion

By implementing Azure Front Door routing for Azure Blob Storage, you can streamline your file-uploading process. Clients upload through a single hostname, which hides the complexity of managing multiple storage accounts and keeps integration simple for your customers. In addition, by using Azure Front Door you benefit from its security features: DDoS protection (the default Azure infrastructure DDoS protection, which monitors and mitigates network layer attacks in real time by using the global scale and capacity of Front Door's network) and Web Application Firewall (WAF), which defends your web services against common exploits and vulnerabilities.