This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Tech Community.

When writing data to Azure SQL DB as part of your Data Factory ETL job using data flows, there are a number of features available to you that can handle common constraints found in target tables including identity inserts (use sink scripts) , handling known constraints in your data flow logic, and the latest feature to trap, log, and continue on row-level errors in SQL DB.

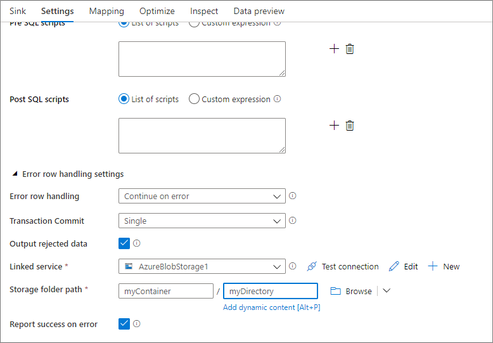

In your ADF Data Flow SQL DB Sink, you will see an option at the bottom for "Error row handling". The default is the current behavior in ADF, which tells ADF to fail fast as soon as a target table constraint is encountered on the target table.

You can now optionally tell ADF to "Continue on Error" so that the ETL process will continue writing rows to the SQL DB sink even after error rows have been encountered. ADF does this through a 2-stage process which means that there is a small performance penalty incurred by choosing this option.

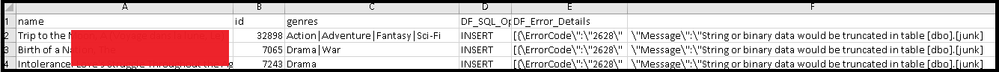

However, once you've decided to pass over error rows and continue writing using the Sink setting, you can now also tell ADF to automatically log those errors along with the error conditions and original data. This will allow you to view the error details as well as to have the opportunity to re-process those original rows, processing only the errored rows.

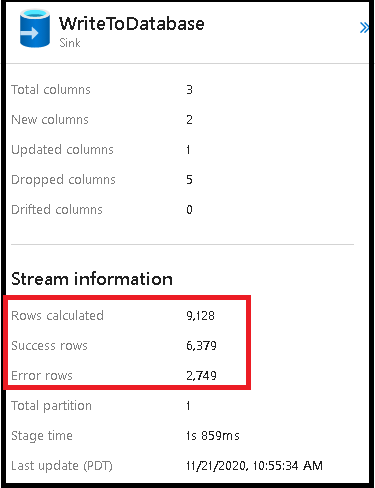

Once you've chosen to "continue on error", you can then choose the return code status of the activity by setting "Report success on error". When true, ADF will return a success code for your data flow even when rows errored. Optionally, set it to false to return a fail status. You will then see the results of the number of success vs. failed rows in the Sink details in the data flow activity monitoring view.