This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs.

The Storage Event Trigger in Azure Data Factory is the building block for an event-driven ETL/ELT architecture (EDA). Data Factory's native integration with Azure Event Grid lets you trigger a processing pipeline based on certain events. Currently, Storage Event Triggers support the Blob Created and Blob Deleted events on Azure Data Lake Storage Gen2 and General-Purpose version 2 storage accounts.

Event-driven architecture (EDA) is a common data integration pattern that involves production, detection, consumption, and reaction to events. Data integration scenarios often require customers to trigger pipelines based on events happening in a storage account, such as the arrival or deletion of a file in an Azure Blob Storage account. Data Factory and Synapse pipelines natively integrate with Azure Event Grid, which lets you trigger pipelines on such events.

The following document and blog post explain how to create an ADF event trigger that runs an ADF pipeline in response to Azure Storage events.

Create event-based triggers - Azure Data Factory & Azure Synapse | Microsoft Learn

Storage Event Trigger - Permission and RBAC setting - Microsoft Community Hub

While the basic architecture and settings remain the same, this blog focuses mainly on SFTP-related storage events and the configuration changes that you currently need to make for them to trigger an ADF pipeline.

As mentioned, the basic steps for creating the trigger remain the same. We need to provide details such as the storage account name (the SFTP-enabled one) and the container name, along with the path pattern (blob path begins with / blob path ends with) to match the triggering conditions.
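To make these inputs concrete, here is a minimal sketch of what a storage event trigger definition could look like when built in Python. The field names follow the `BlobEventsTrigger` JSON shape used by ADF; the trigger name, pipeline name, resource ID, container, and path values are hypothetical placeholders, not values from this post.

```python
import json


def build_storage_event_trigger(storage_account_id, container, prefix="",
                                suffix="", pipeline_name="MyPipeline"):
    """Return a dict shaped like an ADF BlobEventsTrigger definition.

    All argument values are illustrative; replace them with your own.
    """
    type_properties = {
        # ADF expects the container and folder prefix in this path form.
        "blobPathBeginsWith": f"/{container}/blobs/{prefix}",
        "ignoreEmptyBlobs": True,
        # Resource ID of the (SFTP-enabled) storage account.
        "scope": storage_account_id,
        "events": ["Microsoft.Storage.BlobCreated"],
    }
    if suffix:
        type_properties["blobPathEndsWith"] = suffix

    return {
        "name": "SftpBlobCreatedTrigger",  # hypothetical trigger name
        "properties": {
            "type": "BlobEventsTrigger",
            "typeProperties": type_properties,
            "pipelines": [
                {
                    "pipelineReference": {
                        "referenceName": pipeline_name,
                        "type": "PipelineReference",
                    }
                }
            ],
        },
    }


if __name__ == "__main__":
    trigger = build_storage_event_trigger(
        "/subscriptions/<sub-id>/resourceGroups/<rg>/providers/"
        "Microsoft.Storage/storageAccounts/<account>",  # placeholder ID
        container="uploads",
        prefix="incoming/",
        suffix=".csv",
    )
    print(json.dumps(trigger, indent=2))
```

The same values are what you enter in the trigger creation form in the ADF UI; the sketch only shows how they fit together.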

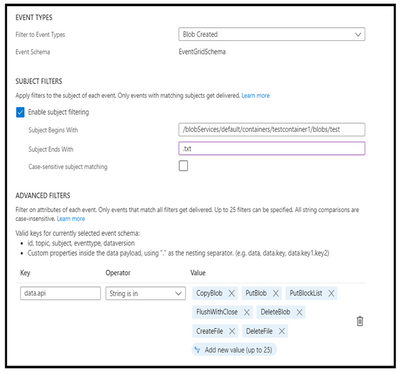

Once you complete the above step, ADF automatically creates the Event Grid configuration at the backend. The resulting configuration reflects the filtering pattern that you selected while creating the trigger.
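As a rough illustration of that relationship, the sketch below maps the trigger's path pattern onto an Event Grid subject filter. Blob storage events carry a subject of the form `/blobServices/default/containers/<container>/blobs/<path>`; the exact translation ADF performs is an assumption here, and the helper name is hypothetical.

```python
def subject_filter_from_trigger(blob_path_begins_with="", blob_path_ends_with=""):
    """Sketch of an Event Grid subscription filter derived from trigger paths.

    Assumes the trigger's "/<container>/blobs/<prefix>" path is appended to
    the fixed "/blobServices/default/containers" subject prefix.
    """
    event_filter = {"includedEventTypes": ["Microsoft.Storage.BlobCreated"]}
    if blob_path_begins_with:
        event_filter["subjectBeginsWith"] = (
            "/blobServices/default/containers" + blob_path_begins_with
        )
    if blob_path_ends_with:
        event_filter["subjectEndsWith"] = blob_path_ends_with
    return event_filter


if __name__ == "__main__":
    # Hypothetical container "uploads" with folder prefix "incoming/".
    print(subject_filter_from_trigger("/uploads/blobs/incoming/", ".csv"))
```

You can compare the output against the event subscription that appears under the storage account's Events blade after the trigger is published.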

If we look at the data APIs that get added, we currently see mainly the Blob Storage REST APIs and the Data Lake Storage Gen2 REST APIs. However, SFTP storage uses a different set of APIs, such as SFTPCreate, SFTPCommit, and SFTPRename. You can also monitor these API calls via diagnostic logging:

Monitoring Azure Blob Storage | Microsoft Learn

Based on these APIs, the corresponding SFTP events are generated, as shown below:

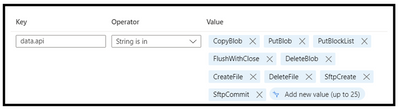

With the default configuration that gets added to the filtering section, operations performed over SFTP still generate an event, but the event is dropped because its data API does not match any value in the filtering conditions. As a result, the trigger does not run the pipeline. Hence, we need to add the SFTP-specific APIs to the data.api section of the filter so that the event matches the triggering conditions.
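The effect of that filter can be sketched in a few lines of Python. This is only a local simulation of a `StringIn` advanced filter on `data.api`, not the Event Grid implementation. The default API list is an assumption about what ADF adds, the SFTP names are spelled as in this post (check the exact values against your own event subscription and diagnostic logs), and the case-insensitive comparison is likewise an assumption.

```python
# Assumed default values that the trigger adds for Blob Created events.
DEFAULT_APIS = ["CopyBlob", "PutBlob", "PutBlockList", "FlushWithClose"]
# SFTP-specific APIs, spelled as in this post; verify against your logs.
SFTP_APIS = ["SFTPCreate", "SFTPCommit", "SFTPRename"]


def make_filter(apis):
    """Build a dict shaped like an Event Grid StringIn advanced filter."""
    return {"operatorType": "StringIn", "key": "data.api", "values": list(apis)}


def matches(event, advanced_filter):
    """Return True if the event value at the filter key is in the value list."""
    node = event
    for part in advanced_filter["key"].split("."):
        if not isinstance(node, dict) or part not in node:
            return False
        node = node[part]
    allowed = {value.lower() for value in advanced_filter["values"]}
    return isinstance(node, str) and node.lower() in allowed


# A trimmed, hypothetical event as produced by an SFTP upload.
sftp_event = {
    "eventType": "Microsoft.Storage.BlobCreated",
    "data": {"api": "SFTPCommit"},
}

default_filter = make_filter(DEFAULT_APIS)
extended_filter = make_filter(DEFAULT_APIS + SFTP_APIS)

if __name__ == "__main__":
    print("default filter matches: ", matches(sftp_event, default_filter))
    print("extended filter matches:", matches(sftp_event, extended_filter))
```

With the default list, the SFTP event does not match and would be dropped; after the SFTP APIs are appended to the `values` array, the same event passes the filter.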

Once these have been added, uploading a blob via SFTP triggers the ADF pipeline successfully.

Hope this helps!