This post has been republished via RSS; it originally appeared at: Microsoft Tech Community - Latest Blogs.

We are happy to announce the public preview of the log compaction feature in Azure Event Hubs. With log compaction, you can create compacted event hubs or compacted Kafka topics in Event Hubs and retain the latest events using key-based retention.

What is Log Compaction?

Log compaction is a way of retaining data in Event Hubs based on the event key. By default, each event hub/Kafka topic is created with time-based retention (the delete cleanup policy), where events are purged when the retention time expires. Rather than using coarse-grained time-based retention, you can use a key-based retention mechanism, where Event Hubs retains the last known value for each event key of an event hub or Kafka topic.

Log compaction can be useful in scenarios where you stream the same set of updatable events. Because compacted event hubs keep only the latest event for each key, users don't need to worry about the growth of the event storage. Log compaction is therefore commonly used in scenarios such as Change Data Capture (CDC), maintaining events in tables for stream processing applications, and event caching.

An event log (of an event hub partition) may have multiple events with the same key. If you're using a compacted event hub, the Event Hubs service takes care of purging old events and keeping only the latest event for a given event key. For example, if keys K1 and K4 each appear more than once in the log, the older events for those keys are discarded.

Client applications can also mark existing events of an event hub to be deleted during the compaction job. These markers are known as tombstones. A client application sets a tombstone by sending a new event with an existing key and a null event payload. In the same example, a tombstone is set for key K5; once compaction completes, the event corresponding to that key is removed from the event log.
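The retention rule described above can be sketched in plain Java. This is only an illustrative model, not the Event Hubs implementation: the class `CompactionSketch`, its `event` helper, and the sample keys and values are assumptions made for the example, and the sketch drops tombstones immediately, whereas the real service decides when they are physically removed.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Illustrative model of key-based compaction on one partition log (hypothetical, not the service code). */
final class CompactionSketch {

    /** Helper to build a (key, value) event; a null value represents a tombstone. */
    static Map.Entry<String, String> event(String key, String value) {
        return new SimpleEntry<>(key, value);
    }

    /** Returns the events that survive compaction, in their original log order. */
    static List<Map.Entry<String, String>> compact(List<Map.Entry<String, String>> log) {
        // First pass: remember the offset of the last event seen for each key.
        Map<String, Integer> lastOffset = new HashMap<>();
        for (int offset = 0; offset < log.size(); offset++) {
            lastOffset.put(log.get(offset).getKey(), offset);
        }
        // Second pass: keep an event only if it is the latest for its key and not a tombstone.
        List<Map.Entry<String, String>> retained = new ArrayList<>();
        for (int offset = 0; offset < log.size(); offset++) {
            Map.Entry<String, String> e = log.get(offset);
            boolean isLatest = lastOffset.get(e.getKey()) == offset;
            boolean isTombstone = e.getValue() == null;
            if (isLatest && !isTombstone) {
                retained.add(e);
            }
        }
        return retained;
    }

    public static void main(String[] args) {
        List<Map.Entry<String, String>> log = Arrays.asList(
                event("K1", "V1"), event("K2", "V2"), event("K1", "V3"),
                event("K4", "V4"), event("K4", "V5"),
                event("K5", "V6"), event("K5", null)); // the last event is a tombstone for K5
        System.out.println(compact(log)); // prints [K2=V2, K1=V3, K4=V5]
    }
}
```

Older events for K1 and K4 disappear, and K5 is removed entirely because its latest event is a tombstone.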

How log compaction works

You can enable log compaction at the event hub/Kafka topic level, and you can ingest events into a compacted event hub from any supported protocol. The Azure Event Hubs service runs a compaction job for each compacted event hub. The compaction job cleans each event hub partition log by retaining only the latest event for a given event key.

At any given time, the event log of a compacted event hub can have a clean portion and a dirty portion. The clean portion of the log contains the events that the compaction job has already compacted, while the dirty portion comprises the events that are yet to be compacted.

The execution of the compaction job is managed by the Event Hubs service, and users can't control it directly. The compaction job is triggered when the size of the dirty portion of the log reaches a certain threshold.
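As a rough mental model of the clean and dirty portions, the following sketch keeps new events in a dirty list and folds them into a clean map once the dirty list reaches a threshold. Everything here is an assumption made for illustration: the class `PartitionLogSketch`, the threshold value, and measuring the dirty portion as an event count are all hypothetical. The actual threshold and scheduling are internal to the service.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Toy model of one partition log with a clean and a dirty portion (hypothetical). */
final class PartitionLogSketch {
    // Hypothetical trigger: compact once the dirty portion holds this many events.
    private final int dirtyThreshold;
    private final Map<String, String> clean = new LinkedHashMap<>(); // already compacted
    private final List<String[]> dirty = new ArrayList<>();          // not yet compacted
    private int compactionRuns = 0;

    PartitionLogSketch(int dirtyThreshold) {
        this.dirtyThreshold = dirtyThreshold;
    }

    /** Appends an event to the dirty portion; the caller never invokes compaction directly. */
    void append(String key, String value) {
        dirty.add(new String[] {key, value});
        if (dirty.size() >= dirtyThreshold) {
            runCompaction();
        }
    }

    /** Folds the dirty portion into the clean portion, keeping the latest value per key. */
    private void runCompaction() {
        for (String[] event : dirty) {
            clean.remove(event[0]);           // drop any older value for this key
            if (event[1] != null) {           // a null value is a tombstone: the key stays removed
                clean.put(event[0], event[1]);
            }
        }
        dirty.clear();
        compactionRuns++;
    }

    int cleanSize() { return clean.size(); }
    int dirtySize() { return dirty.size(); }
    int compactionRuns() { return compactionRuns; }

    public static void main(String[] args) {
        PartitionLogSketch log = new PartitionLogSketch(4);
        log.append("K1", "V1");
        log.append("K2", "V1");
        log.append("K1", "V2");
        System.out.println("dirty=" + log.dirtySize() + " clean=" + log.cleanSize()); // dirty=3 clean=0
        log.append("K3", "V1"); // the fourth event reaches the threshold and triggers compaction
        System.out.println("dirty=" + log.dirtySize() + " clean=" + log.cleanSize()); // dirty=0 clean=3
    }
}
```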

Log compaction for Apache Kafka workloads

Enabling log compaction for Apache Kafka workloads is straightforward. You only need to create an event hub/Kafka topic with the compaction option enabled and publish events with your preferred partition key, which is used as the compaction key.

You don't have to make any code changes to existing Kafka applications that use compaction.

Creating a compacted Kafka topic

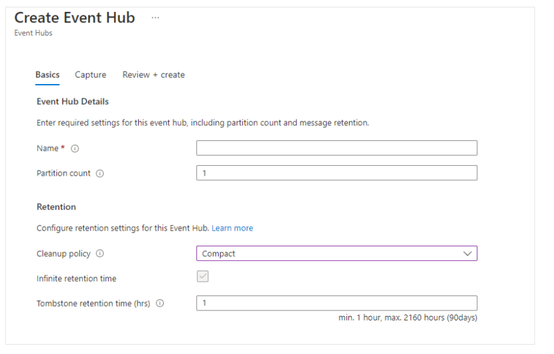

You can create a compacted event hub/Kafka topic inside an Event Hubs namespace by specifying the cleanup policy as ‘Compact’.

Then you can publish or consume data from that topic as you would do with any other topic.

Producing data to a compacted topic

Producing events to a compacted event hub is the same as publishing events to a regular event hub. In the client application, you only need to determine the compaction key, which you set using the partition key.
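The producer snippets in this section use a `producer` object without showing how it is configured. As a minimal sketch, a Kafka client is usually pointed at the Kafka endpoint of an Event Hubs namespace with settings like the ones below. The class name `EventHubsKafkaConfig`, the namespace `mynamespace`, and the connection string placeholder are hypothetical and must be replaced with your own values.

```java
import java.util.Properties;

/** Builds Kafka client settings for an Event Hubs namespace (names and placeholders are hypothetical). */
final class EventHubsKafkaConfig {

    static Properties producerProperties(String namespace, String connectionString) {
        Properties props = new Properties();
        // The Kafka endpoint of an Event Hubs namespace listens on port 9093.
        props.put("bootstrap.servers", namespace + ".servicebus.windows.net:9093");
        props.put("security.protocol", "SASL_SSL");
        props.put("sasl.mechanism", "PLAIN");
        // The user name is the literal string $ConnectionString; the password is the connection string.
        props.put("sasl.jaas.config",
                "org.apache.kafka.common.security.plain.PlainLoginModule required "
                        + "username=\"$ConnectionString\" password=\"" + connectionString + "\";");
        // The record key (used as the compaction key) and the value are both sent as strings.
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return props;
    }

    public static void main(String[] args) {
        Properties props = producerProperties("mynamespace", "<your-connection-string>");
        System.out.println(props.getProperty("bootstrap.servers"));
        // With the Kafka client library on the classpath, the producer would then be created as:
        // Producer<String, String> producer = new KafkaProducer<>(props);
    }
}
```

Nothing in this configuration is specific to compaction, which is consistent with the point that producing to a compacted event hub needs no special client settings.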

In the following Kafka producer application, we send a series of events (keys 1 to 100) followed by a set of updated events for the same keys. To trigger the compaction job quickly, we use a relatively large event payload (~1 MB). For the first event set, each event value starts with the 'V1' prefix.

    // TOPIC, producer, oneMb and kafkaSerializationOverhead are defined elsewhere in the application.
    // Build a payload of roughly 1 MB so that the dirty portion of the log grows quickly.
    byte[] data = new byte[oneMb - kafkaSerializationOverhead];
    Arrays.fill(data, (byte) 'a');
    String largeDummyValue = new String(data, StandardCharsets.UTF_8);

    // Publish the first version (V1) of keys 1 to 100.
    for (int i = 1; i <= 100; i++) {
        System.out.println("Publishing event: Key-" + i);
        final ProducerRecord<String, String> record = new ProducerRecord<>(TOPIC,
                "Key-" + i,
                "V1_" + i + largeDummyValue);
        producer.send(record, new Callback() {
            public void onCompletion(RecordMetadata metadata, Exception exception) {
                // Stop on the first failed send.
                if (exception != null) {
                    System.out.println(exception);
                    System.exit(1);
                }
            }
        });
    }

Then we send updated events for a subset (keys 1 to 50) of the event keys that we previously published. For this second event set, each event value starts with the 'V2' prefix.

    // Publish the updated version (V2) of keys 1 to 50, reusing the same keys as before.
    for (int i = 1; i <= 50; i++) {
        System.out.println("Publishing updated event: Key-" + i);
        final ProducerRecord<String, String> record = new ProducerRecord<>(TOPIC,
                "Key-" + i,
                "V2_" + i + largeDummyValue);
        producer.send(record, new Callback() {
            public void onCompletion(RecordMetadata metadata, Exception exception) {
                // Stop on the first failed send.
                if (exception != null) {
                    System.out.println(exception);
                    System.exit(1);
                }
            }
        });
    }

Once the compaction job has fully compacted the topic, you should no longer see duplicate events for the updated keys (keys 1 to 50).

Consuming data from a compacted topic

No changes are required on the consumer side to consume events from a compacted event hub, so you can use any of your existing consumer applications. With this example, once compaction is done, you will see only the latest events: those with the 'V2' prefix for keys 1 to 50 and those with the 'V1' prefix for keys 51 to 100.
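Until the compaction job has processed the dirty portion of the log, a consumer may still read both the 'V1' and the 'V2' event for a key. A common consumer-side pattern is to fold the events into a map so that the view converges to the same result either way. The sketch below replays the publishing sequence from this example in memory. The class `LatestValueView` is hypothetical, and the large dummy payload is omitted for brevity.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Consumer-side view that keeps only the latest value per key (hypothetical helper). */
final class LatestValueView {

    /** Folds {key, value} records into a map; a null value is treated as a tombstone. */
    static Map<String, String> materialize(List<String[]> records) {
        Map<String, String> view = new LinkedHashMap<>();
        for (String[] record : records) {
            if (record[1] == null) {
                view.remove(record[0]);
            } else {
                view.put(record[0], record[1]);
            }
        }
        return view;
    }

    /** Rebuilds the sequence published in this example: V1 for keys 1 to 100, then V2 for keys 1 to 50. */
    static List<String[]> publishedSequence() {
        List<String[]> records = new ArrayList<>();
        for (int i = 1; i <= 100; i++) {
            records.add(new String[] {"Key-" + i, "V1_" + i});
        }
        for (int i = 1; i <= 50; i++) {
            records.add(new String[] {"Key-" + i, "V2_" + i});
        }
        return records;
    }

    public static void main(String[] args) {
        Map<String, String> view = materialize(publishedSequence());
        System.out.println(view.size());          // prints 100
        System.out.println(view.get("Key-1"));    // prints V2_1
        System.out.println(view.get("Key-50"));   // prints V2_50
        System.out.println(view.get("Key-51"));   // prints V1_51
        System.out.println(view.get("Key-100"));  // prints V1_100
    }
}
```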

MirrorMaker 2 compatibility

Because Azure Event Hubs fully supports Kafka compaction, you can use MirrorMaker 2 to replicate data between an existing Kafka cluster and an Event Hubs namespace, or between Event Hubs namespaces.

Next Steps

To learn more about log compaction in Azure Event Hubs, refer to the following documentation.