This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

In this blog post, you will learn how one could benefit from OpenAI’s chat plugin ecosystem to enhance the capabilities of the state-of-the-art chat completion models when tackling a real-world Postgres and Citus use case: choosing the right shard key (also known as the distribution column).

If you are already using the Citus database extension in Postgres, or Azure Cosmos DB for PostgreSQL, you probably know that shard keys are used in distributed tables to assign table rows to shards.

Choosing the right distribution strategy for your Citus database—such as choosing the right shard key in your PostgreSQL distributed tables, or choosing whether a table should be picked as a reference table—is one of the most important data modeling decisions (and, pain points). Even though you have access to tips for selecting a Citus sharding key for the most common distributed PostgreSQL scenarios, you may still need use-case- or workload-specific help.

In the following sections, you will learn more about:

- LLMs & Chat Completion Models—and Limitations

- 3 Strategies for Making GPT Context Aware

- Exploring How a ChatpGPT Plugin Could Help Select a Citus Shard Key

- Extending the Framework to Other Use Cases

LLMs and Chat Completion Models and Their Limitations

Chat completion models, such as GPT-3 and its successors GPT-3.5 and GPT-4, are a sub-class of Large Language Models (LLMs) and have revolutionized the field of natural language processing. These models are trained on vast amounts of textual data, which enables the models to understand and generate not only natural language but also code based on the input they receive.

These models can be used in a wide range of applications… from chatbots and virtual assistants to content creation and language translation (you can read more about our customer stories). The models’ underlying technology, i.e., transformers, provide complex mechanisms for the models to understand and generate text based on context.

Despite their impressive capabilities, chat completion models are not without their limitations:

- Generalist models. Because the models are trained on a diverse range of data, they may not always provide the most accurate or context-specific responses.

- Information cutoff. At the time of writing this post, the information cutoff date for the latest GPT deployments is September 2021, which means that any events, documentation, or knowledge would not be included in their knowledge base (except for the newly arriving web-browsing capabilities through the plugin system).

- Limited context length. Even the most capable model, GPT-4, comes with two variants: (1) the vanilla version with 8,192 tokens, and (2) the 32k version with 32,768 tokens. With the rule of thumb of 1 token being roughly equal to 0.75 English words, these models are limited to 6,000 and 24,000 words of interactions (including both the user’s input and the model’s response), respectively. This practically means that, beyond the given thresholds, the models lack the ability to remember past interactions or details.

3 Strategies for Making GPT Context Aware: Prompt Design, Fine-tuning, and ChatGPT Plugins

Addressing the limitations of chat completion models—in order to make GPT context aware—can be achieved through a combination of strategies: prompt design, finetuning, and ChatGPT plugins.

Prompt Design. Carefully crafting prompts may be the first approach to guiding the model to produce more accurate and contextually relevant responses (i.e., making the model a specialist). Because the chat completion models are trained to follow/extract patterns in natural language prompts, given context, you can (and should) design your prompts to tell the model what you expect (as patterns) in its output. Usually, this strategy includes:

- showing and telling the models what you expect,

- providing high-quality data from the context you are using the model for, and,

- playing with the model’s settings that affect the randomness of the model’s responses.

Here, you should remember that showing and telling what you expect, as well as providing data, in you prompts do count against the context length limitations of the models.

Fine-Tuning. Fine-tuning is a process where (parts of) the models are further trained on a specific dataset after their initial training. This process is specifically introduced to overcome the limitations of prompt design, i.e., to have the ability to train the models on more examples than can fit in prompts. As a result, fine-tuning results in higher-quality results and token savings when using the models in a particular domain. That said, at the time of writing this post, fine-tuning is only available for some select base models such as, e.g., davinci, curie, babbage and ada (refer to OpenAI documentation on fine-tuning for more up-to-date information).

ChatGPT Plugins. OpenAI plugins connect ChatGPT to third-party applications, enhancing the model’s capabilities and allowing it to:

- retrieve real-time information (e.g., cluster information),

- retrieve domain-specific knowledge (e.g., product documentation past the model’s information cutoff date), and,

- assist users with different actions (e.g., managing clusters).

By leveraging the three strategies above—prompt design, fine-tuning, and ChatGPT plugins—you can significantly enhance the performance and versatility of chat completion models. You can learn more about the use of the ChatGPT plugin ecosystem and specifically more about retrieval plugins for Azure Database for PostgreSQL in some new blog posts. For now, let us continue with how you can leverage prompt design and real-time information injection (via the plugin system) in a real-world use case.

Real-World Example: Exploring How a ChatpGPT Plugin Could Help Select a Citus Shard Key for a Distributed Postgres Cluster

If you have visited the Citus open source documentation on data modeling, you already know that if the shard keys in distributed tables are chosen correctly, related tables will be co-located on the same physical node, which makes queries faster and provides support for all SQL features. Otherwise, the system will run needlessly slow and will not be able to support all SQL features across cluster nodes.

To investigate whether you can make GPT a shard-key selection specialist by carefully crafting prompts and feeding the chat completion model with curated, real-time data, let us consider the two most common Citus workloads:

- online analytical processing, using the GitHub Events dataset; down sampled

- online transaction processing, using the TPC-C/HammerDB benchmark dataset

Short Snippet from Potential Conversation with GPT-4

Before doing anything else, let us try to understand what GPT-4 can say about our problem. Below, you see a short snippet from a potential conversation between a user and GPT-4:

User:

Hi GPT. Please tell me (1) what you know about shard key selection in Citus (e.g., important aspects in picking the right distribution strategy), and (2) your cutoff date for this information. In your response, please list the aspects briefly... no more than 1-2 sentences for each aspect.

GPT:

Sure, here are some key aspects to consider when selecting a shard key in Citus:

- Data Distribution: Choose a shard key that distributes data evenly across all nodes to avoid data skew and hotspots.

- Query Patterns: The shard key should align with your query patterns. If you often filter or join on a particular column, it might be a good candidate.

- Write Operations: If your application has a high volume of write operations, consider a shard key that distributes these writes across multiple nodes to increase throughput.

- Data Co-location: If you frequently perform joins between different tables, it can be beneficial to distribute the tables on the same shard key to enable local joins.

- Tenant Isolation: If your application is multi-tenant, you might want to choose a tenant ID as the shard key to isolate data per tenant.

- Scalability: Choose a shard key that will allow your database to scale as your data grows.

As for the cutoff date, the information I have is up to date as of September 2021.

User:

Do you know if there is a difference between online transaction processing workloads and online analytical processing workloads when choosing the distribution strategy? Again, please keep the response short.

GPT:

Yes, there are differences:

- Online Transaction Processing (OLTP): For OLTP workloads, which are typically write-heavy and involve short, quick transactions, it's beneficial to choose a shard key that distributes writes evenly across nodes and aligns with the most common query patterns.

- Online Analytical Processing (OLAP): For OLAP workloads, which are read-heavy and involve complex analytical queries, it's often beneficial to choose a shard key that co-locates related data to minimize data transfer during query execution. This can improve performance for large, complex queries.

User:

In Citus, what can you tell me about the difference between co-located and repartition joins? Please keep the response short and simple... in 1-2 sentences.

GPT:

- Co-located Joins: In Citus, co-located joins occur when related data from different tables is stored on the same node, allowing the join operation to be performed locally without network overhead. This is efficient but requires careful shard key selection.

- Repartition Joins: Repartition joins are used when the data to be joined is not co-located. Citus automatically redistributes the data across the cluster to perform the join, which can be more resource-intensive due to the data shuffling involved.

The excerpt shows one of many possible interactions with GPT-4 on the topic, and you can see that it is already good enough when you ask questions about an advanced topic in Citus. Specifically, GPT-4 knows that:

- data distribution, query patterns and write operations are some key aspects to consider when choosing a distribution strategy,

- the two most common Citus scenarios require different approaches to shard key selection, and,

- when table joins occur between tables that are not co-located, resource-intensive data shuffling takes place.

The next question is whether we can augment this general Postgres and Citus know-how with prompt design and plugins to obtain a shard-key selection specialist.

Specialist GPT: First Attempt at Citus Shard Key Selection

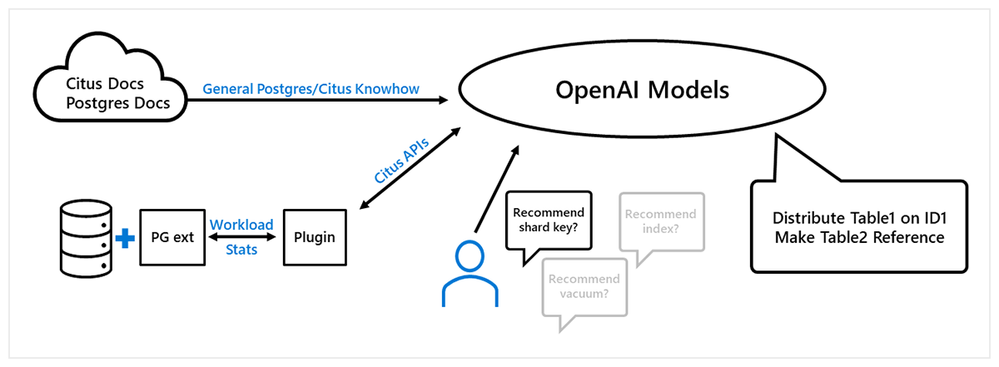

Assume that you have access to a plugin for OpenAI that allows you to feed real-time information from your Azure Cosmos DB for PostgreSQL clusters to GPT-4. Specifically, when you install and enable the plugin, it can fetch your Azure subscription, Azure resource group, and Azure Cosmos DB for PostgreSQL cluster information—as well as certain schema and table data, given a connection string in your Azure Key Vault.

Combining the curated, real-time information coming from the plugin with carefully crafted prompts, you can improve GPT-4’s reasoning and completion skills in the context of Citus shard key selection for your distributed PostgreSQL cluster. Below, you see a set of example user inputs and GPT responses for the two most common Postgres and Citus workflows: OLAP and OLTP.

Example User Input in Online Analytical Processing (OLAP) Workflow

Hi ChatGPT. We own a website that stores user and event information in a Citus cluster. We are interested in certain real-time analytics workloads such as getting aggregated event information. Sometimes this may depend on users’ activities, and other times they are only aggregated across all users. As such, table query patterns such as, e.g., table filter and table join statistics, are important to achieve satisfactory performance.

The two tables mentioned are under the schema “gecrta.” They are in our cluster “chatgpt-cluster” in the resource group “rg-chatgpt-plugin” under the “Marlin - Dev CP” subscription. You should be able to get the connection string from the Key Vault “kv-chatgpt-plugin.”

Please use the installed plugin to get the tables’ statistics, and provide a concise answer with a distribution strategy for our workload together with the reasoning and the accompanying SQL commands.

Short 1-minute video for OLAP workflows, showing how this ChatGPT plugin could work with Azure Cosmos DB for PostgreSQL (aka with Citus on Azure):

Video 1: For OLAP online analytical processing workflows, an example interaction between a user and GPT-4, to help select the Citus shard key (aka the distribution column)

Example User Input in Online Transaction Processing (OLTP) Workflow

Hi ChatGPT. We are a retail company having a lot of warehouses that are geographically distributed. We are using a Citus cluster to manage our online transaction workloads.

We are planning to distribute the warehouse information in the cluster, but we do not know much about picking the right distribution strategy for the other tables. We need you to help us pick the right distribution strategy for our tables under the hammerdb schema.

The cluster in question is the “chatgpt-cluster” in our resource group “rg-chatgpt-plugin” under the “Marlin - Dev CP” subscription, and you can get the connection string from the “kv-chatgpt-plugin” Key Vault.

Please use the installed plugin to get all the tables’ statistics, and provide a concise answer with short reasoning on the distribution strategy choice, and the set of SQL commands to achieve the distribution strategy.

Short 45-second video for OLTP scenarios, showing how this ChatGPT plugin could work with Citus and Postgres

Video 2: For OLTP online transaction processing scenarios, an example interaction between a user and GPT-4, to help select the Citus shard key aka the distribution column.

Above, you see a sneak peek at how one could make GPT-4 a shard-key selection specialist for Postgres and Citus by carefully designing prompts and using the plugin ecosystem. Together, the two strategies exploit GPT-4’s general Postgres and Citus know-how and provide enough context to the model for the problem.

Important Note. Even though the first results look very promising, you should note that these models are probabilistic chat completion models, and hence, further efforts are needed to obtain consistent results.

Extending the Framework to Other Use Cases

In this post, you have learned about a potential application of the use of state-of-the-art chat completion models, together with the plugin ecosystem and prompt design, in a real-world Postgres/Citus use case.

These large language models (LLMs) are trained on vast amount of data and can understand and generate natural language prompts, which makes them apt generalist assistants. To alleviate their limitations such as the information cutoff date and context length, a combination of different strategies can be used.

You have seen that the use of prompt design and ChatGPT plugins in order to make a shard key specialist from GPT-4 can yield promising results. For the two most common Citus scenarios, GPT-4 could pick the correct shard key and provide interesting responses to follow-up questions.

Like many of you, we are excited to see all these new capabilities come to life in the future as plugins and plugin integrations with Azure OpenAI (plugin integrations are now in private preview.) Stay tuned for more updates!

Further Reading

- OpenAI | Prompt Design

- OpenAI | Fine-Tuning

- OpenAI | Chat Plugins

- Microsoft TechCommunity Blog | OpenAI ChatGPT Retrieval Plugin with Postgres

- Citus Docs | Choosing Distribution Column

- Citus Blog | How to benchmark performance of Citus and Postgres with HammerDB on Azure