This post has been republished via RSS; it originally appeared at: New blog articles in Microsoft Community Hub.

OpenAI ChatGPT plugins unlock many potential scenarios interacting with real time systems and data. Particularly retrieval plugins enable ChatGPT to access data sources such as PostgreSQL. Recently Azure Database for PostgreSQL – Flexible Server and Azure Cosmos DB for PostgreSQL added support for the pgvector extension to Postgres.

This post gives you a glimpse of future where - with a combination of Open AI / ChatGPT retrieval plugin (in beta) and pgvector, you will be able to use ChatGPT to store, search, and append knowledge with data from databases created using PostgreSQL on Azure.

In this post, you’ll learn:

- What are “Embeddings” in the context of OpenAI?

- What is pgvector?

- Quick primer on ChatGPT plugins

- How do retrieval plugins work?

- Sneak peek into what Azure OpenAI plugins will be able to do for PostgreSQL

- It is just the beginning

What are “Embeddings” in the context of OpenAI?

The first step is to understand "Embeddings", which are a numerical representation of data. An embedding is an array of floating-point numbers (vectors) that include some semantic meaning of the data. Embeddings are used in various scenarios, often used to encode natural language text, and enables easy comparison of semantic meaning between words and phrases.

For example, “hello world” and “hello this world” have similar semantic meaning. So, when their embeddings are compared using distance metrics, such as cosine similarity, the close semantic meaning will be evident by a small distance between the embeddings.

Here is a sample code and result which can help understand the concept (thanks to ChatGPT for putting this sample code together :smiling_face_with_smiling_eyes:)

And the output is as follows:

The code above does a cosine similarity comparison of “hello world” with other given text. When cosine similarity is used, values closer to 1 in the range of -1 to 1 indicates similar meaning. As seen in the output, the value of “hello this world” is the most closer to “hello world” indicating close semantic meaning compared to the words such “not in this world”, “dog” & “cat”.

What is pgvector?

Pgvector is an open source PostgreSQL extension which helps users store, index, and search over embeddings.

This extension offers a new datatype named ‘vector’ which can then be used to store the embeddings and perform similarity comparisons. Here is an example where you can use the SQL semantics in combination with pgvector datatype and operations to calculate similarity of contents stored in a PostgreSQL database:

Store the embedding of “hello world” and other strings from the example above, into the database and run the cosine similarity search:

The “hello this world” shows up as the one closer to the "hello world", followed by the “not this world” and then “dog” and “cat”.

The pgvector open source extension is supported now in both:

Quick primer on ChatGPT plugins

OpenAI offers plugins that allow ChatGPT to interface with API endpoints to retrieve external information. There is a variety of examples and instructions given in Introduction - OpenAI ChatGPT Plugins. ChatGPT plugins are currently available in beta

How do retrieval plugins work?

The ChatGPT retrieval plugin provides an easy way to read data from a vector database using semantic similarity, and upsert new data into the database. For example, user conversations with ChatGPT, and their embedding representations can be stored for later retrieval. With PostgreSQL as a supported datastore, the retrieval plugin can store and interact with a PostgreSQL database.

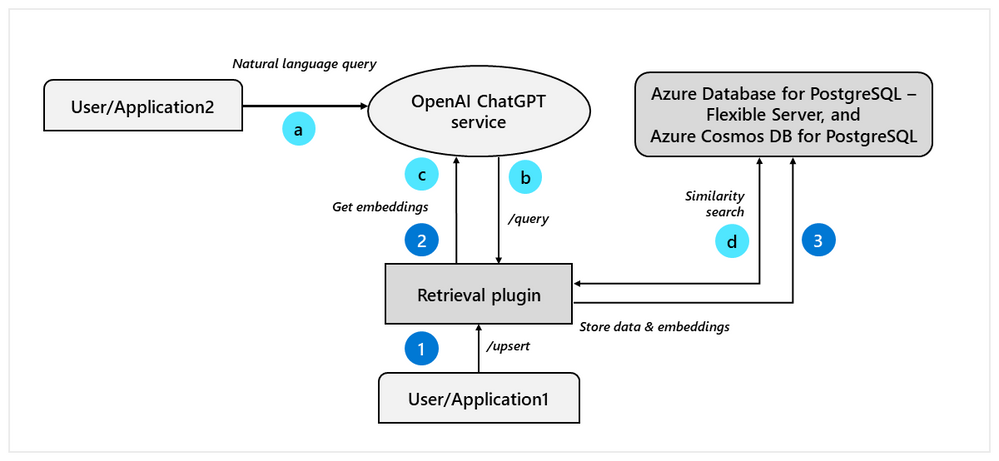

The diagram below depicts the interactions between the retrieval plugin, database and OpenAI/ChatGPT service. The retrieval plugin exposes endpoints such as /query and /upsert, and further details of functionality can be found in the retrieval plugin API Endpoints documentation.

Initially, you may want to input data into the database that ChatGPT can later recall, as you can see in the diagram above:

- (1) To input data into the database,

/upsertendpoint or/upsert-filecan be called. - (2) Within the retrieval plugin this will trigger calls to get embedding from the OpenAI API endpoint.

- (3) After obtaining the embedding, the retrieval plugin stores the input data and embeddings into the backend datastore.

In the context of this post, PostgreSQL is used as a datastore which is managed by Azure Database for PostgreSQL - Flexible Server. The embeddings are stored as vector types offered by the pgvector extension.

OK, now let's explore the ChatGPT portion of the workflow (indicated by letters) in Figure 1's diagram above:

- (a) language queries posed by the user to ChatGPT

- (b) the OpenAI / ChatGPT service will reach out to the

/queryendpoint of the plugin - (c) the plugin then calls OpenAI / ChatGPT to get embeddings for the query text

- (d) the plugin performs a similarity search on the contents in the database

The pgvector extension provides similarity search on vectors and this facility is used by the plugin to find out the similar strings. ChatGPT then appends more context to the results and returns the information to the user.

Here are set of instructions to help you configure the retrieval plugin (that is using pgvector) with OpenAI and Flexible Server in Azure Database for PostgreSQL—in a local setup for testing.

- Create a managed database instance: Managed instance of Azure databases for PostgreSQL Flexible Server can be created using azure cli commands, documentation for which can be found here az postgres flexible-server | Microsoft Learn. Alternatively, the server can be provisioned from the Azure portal by searching for Azure Database for PostgreSQL Flexible Server in Marketplace.

- Allow list pgvector and another extension to try out the test code: The pgvector extension can be allow listed on the Managed PostgreSQL created using set parameter cli command az postgres flexible-server parameter | Microsoft Learn

az postgres flexible-server parameter set --resource-group <resource-group-name> --server-name <postgres-server-name> --name azure.extensions --value "vector,uuid-ossp" - Environment setup for retrieval plugin: Before running the openai/chatgpt-retrieval-plugin, the following environment variables are expected to be configured.

export DATASTORE=POSTGRES export OPENAI_API_KEY=<api-key-generated-on-openai> export PG_HOST=<azure-managed-postgres-server-url> export PG_DATABASE=postgres export BEARER_TOKEN=<token-for-client-authorization> export PG_PORT export PG_USER export PG_PASSWORD - Initialization scripts to setup the tables: Run the init scripts available from chatgpt-retrieval-plugin/setup.md and validate the extension and the documents tables

postgres=> \i init.sql CREATE EXTENSION CREATE TABLE CREATE INDEX CREATE INDEX CREATE INDEX CREATE INDEX CREATE INDEX ALTER TABLE CREATE FUNCTION postgres=> \dx List of installed extensions Name | Version | Schema | Description -----------+---------+------------+------------------------------------------------- azure | 1.0 | pg_catalog | azure extension for PostgreSQL service pg_cron | 1.4-1 | public | Job scheduler for PostgreSQL pgaadauth | 1.0 | pg_catalog | Azure Active Directory Authentication pgcrypto | 1.3 | public | cryptographic functions plpgsql | 1.0 | pg_catalog | PL/pgSQL procedural language uuid-ossp | 1.1 | public | generate universally unique identifiers (UUIDs) vector | 0.4.1 | public | vector data type and ivfflat access method (7 rows) postgres=> \dt List of relations Schema | Name | Type | Owner --------+-----------+-------+---------- public | documents | table | pgvector (1 row) - Running the plugin locally: The python dependency management tool poetry is used by the retrieval plugin to instantiate the local API endpoint.

$ poetry run dev INFO: Will watch for changes in these directories: ['/home/rohan/chatgpt-retrieval-plugin'] INFO: Uvicorn running on http://localhost:3333 (Press CTRL+C to quit) INFO: Started reloader process [1701689] using WatchFiles INFO: Started server process [1701697] INFO: Waiting for application startup. INFO: Application startup complete. - Add content into the datastore using the /upsert endpoint using the local endpoint at http://0.0.0.0:8000/docs. The details for traversal can be found here: API endpoints. Here we input the contents we are going to query against – two strings which talks about the Azure Database for PostgreSQL supported versions and extensions.

curl -X 'POST' \ 'http://localhost:3333/upsert' \ -H 'accept: application/json' \ -H 'Content-Type: application/json' \ -d '{ "documents": [ { "id": "12", "text": "Azure Databases for PostgreSQL supports PostgreSQL versions 11, 12, 13, and 14. The public preview of PostgreSQL 15 will be announced soon.", "metadata": { "source": "file", "source_id": "file", "url": "www.localhost.com", "created_at": "05-18-2023", "author": "xyz" } } ] }' {"ids":["12"]} -- output curl -X 'POST' \ 'http://localhost:3333/upsert' \ -H 'accept: application/json' \ -H 'Content-Type: application/json' \ -d '{ "documents": [ { "id": "13", "text": "Azure Databases for PostgreSQL supports several extensions. Some of these include TimeScaleDB, PostGIS, PgAnonimizer, PgVector.", "metadata": { "source": "file", "source_id": "file", "url": "www.localhost.com", "created_at": "05-18-2023", "author": "xyz" } } ] }' {"ids":["13"]} -- output - Register the local plugin with instructions from: https://platform.openai.com/docs/plugins/getting-started/running-a-plugin

- Query ChatGPT and the data retrieved via the plugin will start to appear in the context.

Figure 2: Query ChatGPT using retrieval plugin on supported PostgreSQL versions.

Figure 3: Query ChatGPT using retrieval plugin on supported extensions.

As you can see ChatGPT appends the knowledge it gains using retrieval plugin.

Sneak peek into what Azure OpenAI plugins will be able to do for PostgreSQL

At Build, Azure OpenAI is announcing a private preview for plugins. Below you can see a sneak peek of using PostgreSQL as retrieval plugin datastore with Azure OpenAI. On posting the question the retrieval plugin was triggered. The plugin queries the PostgreSQL database and returns relevant results to the service. The model used the results from the query to give a response grounded in data entered earlier in the database.

Stay tuned for a screenshot !

It is just the beginning

We are just at the beginning of a quantum shift in AI and Data. Some features and functionality mentioned in this post are currently in beta/private preview mode. As we go through this journey to fully operationalizing new workloads to production, there are interesting challenges of data privacy, performance, and enterprise security to address. There is a lot more to come. Until next time, goodbye, and happy ChatGPT-ing :smiling_face_with_smiling_eyes:!

Where to learn more?

Here are a few links where you can learn more on the topics relevant to this post:

- Azure Database for PostgreSQL – Flexible Server

- Azure Cosmos DB for PostgreSQL

- GitHub - pgvector/pgvector: Open-source vector similarity search for Postgres

- ChatGPT plugins (openai.com)

- GitHub - openai/chatgpt-retrieval-plugin: The ChatGPT Retrieval Plugin lets you easily find personal or work documents by asking questions in natural language.

- Azure OpenAI Service – Advanced Language Models | Microsoft Azure