This post has been republished via RSS; it originally appeared at: AzureCAT articles.

In our eighth blog in this series, we'll continue to explore the Azure Data Architecture Guide. The previous entries for this blog series are:

- Azure Data Architecture Guide – Blog #1: Introduction

- Azure Data Architecture Guide – Blog #2: On-demand big data analytics

- Azure Data Architecture Guide – Blog #3: Advanced analytics and deep learning

- Azure Data Architecture Guide – Blog #4: Hybrid data architecture

- Azure Data Architecture Guide – Blog #5: Clickstream analysis

- Azure Data Architecture Guide – Blog #6: Business intelligence

- Azure Data Architecture Guide – Blog #7: Intelligent applications

Like the previous post, we'll work from a technology implementation seen directly in our customer engagements. The example can help lead you to the ADAG content to make the right technology choices for your business.

Data warehousing

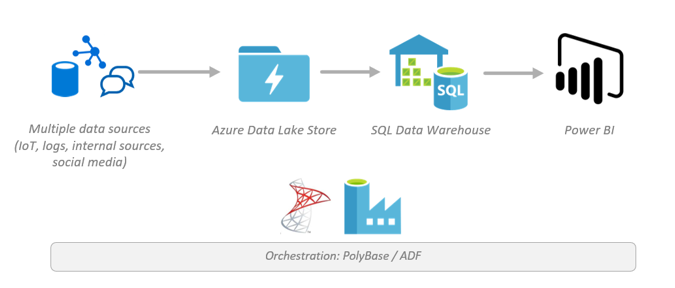

Here we see store data coming from multiple sources into Azure Data Lake Storage, in their native format. (Azure Data Lake Storage Gen 2 is recommended.) Azure SQL Data Warehouse directly queries against the data with a combination of external tables and schema on read capabilities through PolyBase. Use Azure Data Factory to store the data you need within your warehouse, and quickly analyze and visualize the combined data with Power BI.

Highlighted services

- Azure Data Lake Storage Gen 1 (legacy)

- Azure Data Lake Storage Gen 2 (recommended)

- Azure SQL Data Warehouse

- PolyBase

- Azure Data Factory

- Power BI

Related ADAG articles

- Traditional RDBMS workloads

- Cross-cutting concerns

- Technology choices

See Also

The Reference Architecture, Enterprise BI in Azure with SQL Data Warehouse, implements an extract, load, and transform (ELT) pipeline that moves data from an on-premises SQL Server database into SQL Data Warehouse and transforms the data for analysis.

The Azure Architecture Center also features two related example scenarios that demonstrate solutions using these technologies:

- Data warehousing and analytics for sales and marketing

- Hybrid ETL with existing on-premises SSIS and Azure Data Factory

In addition, the Modern Data Warehouse solution ingests the data sources through Azure Data Factory, combining your data to Azure Blob Storage. It uses Azure Databricks to prep and train cleansed and transformed data, to be moved to Azure SQL Data Warehouse (which acts as the data hub). Like the Reference Architecture above, this solution leverages Azure Analysis Services for data modeling (then on to Power BI for your visualizations).

For more information about the data movement, see the following articles:

- Load data from Azure Data Lake Storage Gen1 to SQL Data Warehouse

- Copy data to or from Azure Data Lake Storage Gen1 by using Azure Data Factory

- Copy data to or from Azure Data Lake Storage Gen2 Preview using Azure Data Factory (Preview)

- Copy data to or from Azure SQL Data Warehouse by using Azure Data Factory

- Tutorial: Extract, transform, and load data using Azure Databricks

- Choosing a data pipeline orchestration technology in Azure

For more information about stream processing and Azure Data Bricks, see our reference architecture, Create a stream processing pipeline with Azure Databricks, and our ADAG article, Choosing a stream processing technology in Azure.

For more information about Azure Analysis Services and advanced analytics, see our last blog post in this blog series, Azure Data Architecture Guide – Blog #6: Business intelligence, our ADAG article, Choosing an analytical data store in Azure, our two Reference Architectures, Enterprise BI in Azure with SQL Data Warehouse and Automated enterprise BI with SQL Data Warehouse and Azure Data Factory, our Example Scenario Data warehousing and analytics for sales and marketing, and our two Solutions, Advanced analytics on big data and Real-time analytics.

Please peruse ADAG to find a clear path for you to architect your data solution on Azure:

AzureCAT Guidance

"Hands-on solutions, with our heads in the Cloud!"